Life Is A Continuous Game of Tetris

A Futures Thinking Perspective

👋 Hello friends,

Thank you for joining this week's edition of Brainwaves. I'm Drew Jackson, and today we're exploring:

Underappreciated Uncertainties In Our World

Key Question: How do our actions, whether they align with or run against the certainties in life, explicitly or implicitly affect our lives?

Thesis: While the vast majority of life is subject to minimal uncertainty, our tendency to avoid and overlook the parts that are subject to large-scale uncertainty (irreducible, complex uncertainties) can leave us vulnerable to Black Swan-esque events.

Credit The Namibian

Before we begin: Brainwaves arrives in your inbox every other Wednesday, exploring venture capital, economics, space, energy, intellectual property, philosophy, and beyond. I write as a curious explorer rather than an expert, and I value your insights and perspectives on each subject.

Time to Read: 39 minutes.

Let’s dive in!

It was six men of Indostan

To learning much inclined,

Who went to see the Elephant

(Though all of them were blind),

That each by observation

Might satisfy his mind.

The First approached the Elephant,

And happening to fall

Against his broad and sturdy side,

At once began to bawl:

"God bless me! but the Elephant

Is very like a wall!"

The Second, feeling of the tusk,

Cried, "Ho! What have we here?

So very round and smooth and sharp?

To me 'tis mighty clear

This wonder of an Elephant

Is very like a spear!"

The Third approached the animal,

And happening to take

The squirming trunk within his hands,

Thus boldly up and spake:

"I see," quoth he, "the Elephant

Is very like a snake!"

The Fourth reached out an eager hand,

And felt about the knee.

"What most this wondrous beast is like

Is mighty plain," quoth he;

"'Tis clear enough the Elephant

Is very like a tree!"

The Fifth who chanced to touch the ear,

Said: "E'en the blindest man

Can tell what this resembles most;

Deny the fact who can,

This marvel of an Elephant

Is very like a fan!"

The Sixth no sooner had begun

About the beast to grope,

Than, seizing on the swinging tail

That fell within his scope,

"I see," quoth he, "the Elephant

Is very like a rope!"

And so these men of Indostan

Disputed loud and long,

Each in his own opinion

Exceeding stiff and strong,

Though each was partly in the right

And all were in the wrong!

So oft in theologic wars

The disputants, I ween,

Rail on in utter ignorance

Of what each other mean,

And prate about an Elephant

Not one of them has seen!

- Poem by John Godfrey Saxe, based on a Hindu Parable

The future actively shapes our lives, yet the way humans have historically thought about and approached it has been flawed. Futures Thinking is a modern attempt to rethink that approach.

Rather than trying to predict specific future events, Futures Thinking encourages a shift in how we conceptualize the future itself—drawing on diverse cultural perspectives, foundational world characteristics, deep modern literature reviews, and recognizing that our present actions and narratives significantly influence future outcomes. Since most major life decisions are essentially bets on the future, adopting this framework could transform how we approach education, careers, relationships, and other essential aspects of life.

Today, our discussion revolves around how our world is set up and how these underlying characteristics shape everything that goes on in the world, specifically focusing on Futures Thinking Tenet #4: The future is majorly—if not entirely—uncertain.

Credit Universitat Zurich

A CRITIQUE OF A FELLOW INQUISITOR WHO I ADMIRE DEEPLY - DEFINING THE LIMITS OF UNCERTAINTY - UNCERTAINTY SITS ON A SPECTRUM, ALONG WITH MANY OTHER COMMONPLACE FEELINGS

I wholeheartedly admire what Packy McCormick over at Not Boring has done: advancing the modern literature on tech companies through a casual, easy-to-digest format, yet not backing down from tackling the tough, gritty issues.

However, in reading one of his articles recently, The Return of Magic, I came across the following excerpt:

The future we were promised is finally here.

It feels like we’re approaching technology’s end game. The Fukuyaman “End of Technology.”

Of course, we still need to build the things. We need to give the curves time to play out. But there is growing consensus on what the future looks like.

We will use atomic and solar energy to power abundance, travel at greater speeds with greater levels of autonomy across the globe, colonize the Moon, then colonize Mars. With gene and cell therapies, we will first cure disease, and then expand what the human body can do. Our agents will do mundane work for us, while we do art or whatever. They’ll help accelerate progress in science and engineering, and speed up the inevitable arrival of Dyson Spheres and O’Neill Cylinders and fully compliant humanoid servants with whom we will communicate via our Brain Computer Interfaces.

On the surface, Packy’s simply describing how he believes/foresees that we’re moving into a time “where technology and magic coexist, where consciousness is as fundamental as matter, and where direct experience matters as much as double-blind studies.”

However well-intentioned, Packy, like many others throughout history—usually labeled under the term “futurists”—is attempting to make assertions about what the future will and won’t be like. As we’ll see throughout this article and the writings in Tenet #5, that’s much more easily said than done.

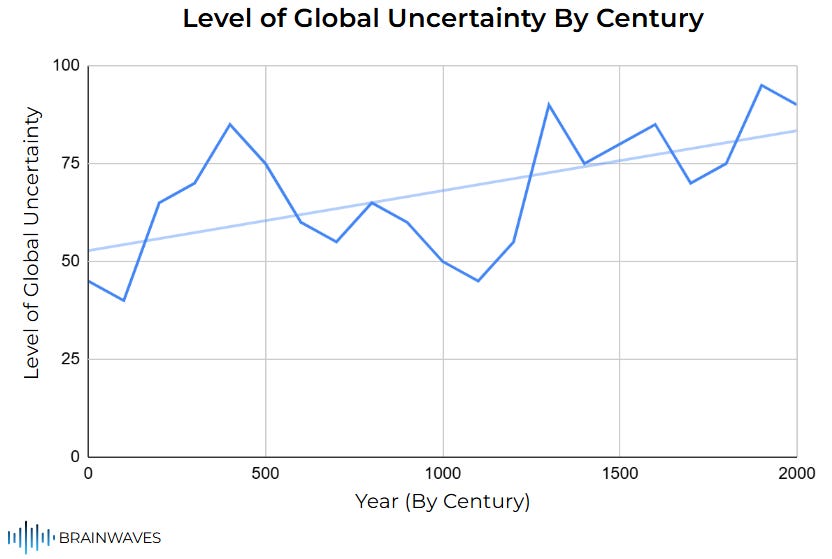

I specifically take issue with his sentence, “But there is growing consensus on what the future looks like.” In fact, I am going to claim the exact opposite: The future is the most uncertain it has ever been in the entirety of human history.

Here’s a sneak peek at what I mean, summarized century by century by Google’s Gemini AI (the trendline is shown for ease of understanding):

Credit Gemini

Today, and in many areas throughout the Futures Thinking series, my goal is the one stated by Amar Bhide, author of Uncertainty and Enterprise: Venturing Beyond the Known: “I aim to stimulate inquiry into neglected questions about the role of uncertainty in human affairs and improve our understanding of how to manage it.”

Throughout our lives, in our literature, education, politics, and almost every facet of our existence, uncertainties are often overlooked. This is completely understandable; they’re concepts and topics that are difficult to pin down.

We, as humans, fundamentally like to live in the black-and-white states of the world, where something is either one way or the other. We were primarily taught that way growing up, and it has ingrained in us a habit of viewing the world through this dualistic lens.

Unfortunately, the majority of the world lives within the area between the two extremes, aptly dubbed the “gray area”. There are very few rules and characteristics describing this section of the world, of our lives, but one constant emerges throughout: uncertainty.

As Oxford Languages defines it, a gray area is an ill-defined situation or field not readily conforming to a category or to an existing set of rules. In many cases, our world is ill-defined because the future has not presented itself yet (or else it wouldn’t be called the future). In others, it’s a new field in which we don’t have rules or an understanding yet.

As the Collins Dictionary adds, this gray area is “an area or part of something existing between two extremes and having mixed characteristics of both”. To foreshadow, we’ll talk deeply about the spectrum of life, and how most of it sits in the gray area between the two extremes (true certainty and true uncertainty).

To begin discussing the characteristics of this “gray area”, we must first start on a level playing field regarding the definitive boundaries of uncertainty and its related terminology. Throughout my readings and discoveries on this topic, I’ve seen that each philosopher, businessman, and everyday human has a slightly different definition of the word uncertainty, and as such, for us to have a productive discussion today, we must come to a common working consensus.

In a business context, the most famous and cited “father” of the idea of integrating uncertainty into our enterprise thought processes would undoubtedly be Frank Knight in his 1921 work Risk, Uncertainty, and Profit. Within this, he posited the difference between “uncertainty” and “risk”.

Knight defined risk as “measurable uncertainty”, referring to situations where the probabilities of different outcomes are known or can be objectively calculated. In contrast, Knight defined uncertainty here as “true uncertainty” or “unmeasurable uncertainty”, referring to situations where the probabilities of different outcomes are unknown and cannot be objectively measured or calculated.

Knight’s thesis is much more practical for the business world (a subject of a lengthy future article), but it does provide a solid foundation from which to build our definitions today.

John Maynard Keynes, one of the most influential modern economists, elaborated on Knight’s thesis in a 1937 article defending his 1936 General Theory, quoted by Amar Bhide as follows:

By “uncertain” knowledge, let me explain, I do not mean merely to distinguish what is known for certain from what is only probable. The game of roulette is not subject, in this sense, to uncertainty; nor is the prospect of a Victory bond being drawn. Or, again, the expectation of life is only slightly uncertain. Even the weather is only moderately uncertain. The sense in which I am using the term is that in which the prospect of a European war is uncertain, or the price of copper and the rate of interest twenty years hence, or the obsolescence of a new invention, or the position of private wealth-owners in the social system in 1970. About these matters, there is no scientific basis on which to form any calculable probability whatsoever. We simply do not know.
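To make the roulette example concrete, here is a minimal sketch of what "measurable uncertainty" looks like in practice. It assumes a European wheel (37 pockets) and a straight-up bet that pays 35 to 1; the function name `expected_value` is my own, chosen only for illustration. The point is that every probability is known in advance, so the long-run result can be calculated before a single spin, which is exactly what cannot be done for the price of copper twenty years hence.

```python
from fractions import Fraction


def expected_value(outcomes):
    """Sum of probability * payoff over (probability, payoff) pairs."""
    total_probability = sum(p for p, _ in outcomes)
    if total_probability != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(p * payoff for p, payoff in outcomes)


# Assumed setup: European wheel with 37 pockets, straight-up bet pays 35 to 1.
POCKETS = 37
straight_up_bet = [
    (Fraction(1, POCKETS), 35),            # win: gain 35 units
    (Fraction(POCKETS - 1, POCKETS), -1),  # lose: forfeit the 1-unit stake
]

ev = expected_value(straight_up_bet)
print(f"Expected result per 1-unit bet: {ev} (about {float(ev):.4f})")
```

The individual spin is still unpredictable, but the bet as a whole is not uncertain in Knight's or Keynes's sense: the average loss per unit staked is fixed by the rules of the game.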

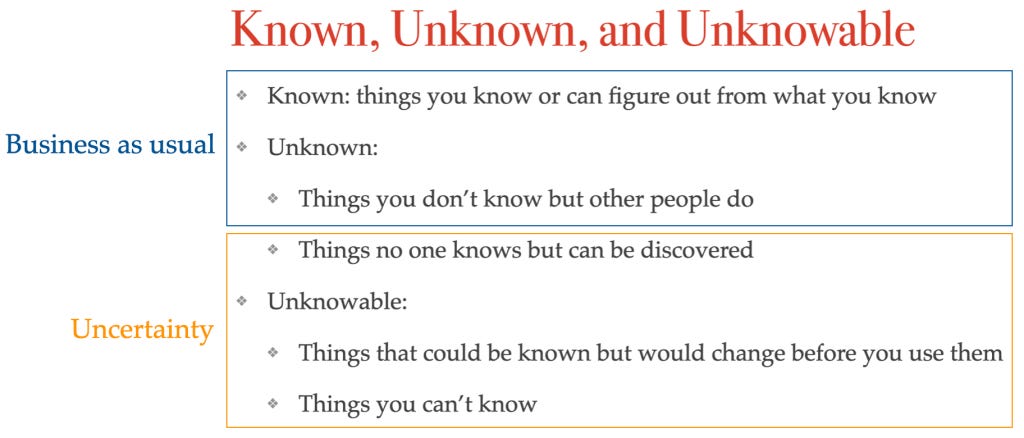

Since then, hundreds of economists, philosophers, and strategic thinkers have refined that definition, culminating in, in my opinion, the “best” working definition, as summarized by Jerry Neumann, author of the Reaction Wheel newsletter:

Credit Reaction Wheel

In his model, Neumann posits 5 “layers” of uncertainty and knowledge:

Layer 1: The things you know or can figure out from what you know. Examples of things in this layer would be the date of your birthday, the capital of your country, the city you live in, the sum of 2 + 2, the number of eggs in a dozen, how much money is in your wallet, what you ate for breakfast, the last time you took a shower, or the exact number of steps it would take you to walk a mile.

Layer 2: The things you don’t know but other people do. Examples of things in this layer would be the exact profit margin of a public company last quarter, the current inventory level of a product at a large retail chain, the best way to fix a plumbing issue in your house (if you’re not a plumber), the answer to a complicated trivia question, or the exact number of attendees at the last Super Bowl.

Layer 3: The things no one knows but can be discovered. Examples of things in this layer would be the cure for cancer, the best planet in the universe to sustain human life, a new archeological site or lost city, the next big technological breakthrough (e.g. the AI bubble if you were in 2018), the precise sequence of events leading to a crime that has just occurred, or whether a new pharmaceutical compound will be effective in human trials.

Layer 4: The things that could be known but would change before you use them. Examples of things in this layer would be the exact price of a highly volatile stock if you’re trying to execute a trade based on a price you saw a few minutes ago, the exact traffic conditions on a busy highway several hours from now, the current exact location of a free-roaming wild animal in a vast preserve, the global financial market sentiment years from now, or the precise amount of water flowing through a river during the onset of a flash flood.

Layer 5: The things nobody can know. Examples of things in this layer would be the exact moment and circumstances of the deaths of 95%+ of humans, the thoughts or conscious experience of another person in their entirety, what you will dream about every night, or the ultimate fate of the universe in billions of years.

To dive into this framework further, the unknown consists of both things other people know that you don’t (Layer 2) and things that no one knows yet but can be known at some point (Layer 3). Knowledge that other people have and you lack can usually be obtained easily, especially in the 21st-century internet age.

The second type of unknown knowledge, the knowledge contained in Layer 3, is slightly more complicated; if you want the knowledge, you have to go out and create it. The knowledge is knowable, it’s just that nobody knows it yet. This is described as “fundamental uncertainty”, a term pertaining to situations in which the information does not exist at the time of the decision. As Neumann puts it, this is the first type of uncertainty: novelty uncertainty.

Novelty uncertainty is when there are things you just don’t know, even after doing all of your research and thinking things through to their logical conclusions. Neumann explains it as follows:

I call it novelty uncertainty because it is especially common when someone does something for the first time. Doing the thing creates the knowledge, so this kind of uncertainty is mitigated through doing things. Early explorers may have had no way to predict what was across the ocean, but once they sailed there, they knew.

The unknowable covers both knowledge you can get but that is subject to constant change (Layer 4) and knowledge that simply cannot be gotten (Layer 5); unknowable means you can’t know it, at least not for good. This type of uncertainty often arises when things are changing. For instance, you might try something and see what happens, but the next time you do the same thing, something different happens.

Herbert Simon, another notable economist, discussed these properties of uncertainty, which “includes unknown unknowns—possibilities that we cannot imagine—as well as known unknowns.”

Neumann calls this complexity uncertainty, a symptom of the unpredictable change that arises from the interactions of complex systems. As we’ve discussed throughout Tenets #1, #2, and #3, complex systems govern our world, and their interactions can lead to a variety of mixed effects with exponential outcomes.

Furthermore, in the literature surrounding the concept of uncertainty, you’ll sometimes hear the phrases “reducible uncertainty” and “irreducible uncertainty.”

The concept of reducible uncertainty, also known as “epistemic uncertainty,” refers to Layers 1-3, which arise from a lack of knowledge or information about a system or part of the world. It’s the uncertainty that we could reduce by collecting more data, conducting more research, improving our models, or refining our understanding.

The concept of irreducible uncertainty, also known as “aleatoric uncertainty,” refers to Layers 4-5, which arise from the inherent randomness or variability within the world. It’s the uncertainty that cannot be eliminated, no matter how much data you collect or how much you refine your models, because it’s a fundamental property of the system.
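The difference between the two can be sketched with a coin whose bias we don't know. This is only an illustration under my own assumptions (a simulated coin, and the helper names `standard_error` and `outcome_std`, none of which come from the literature above): the more flips we observe, the more precisely we know the bias, which is the reducible part, yet the spread of any single future flip barely moves, which is the irreducible part.

```python
import math
import random


def estimate_bias(flips):
    """Fraction of heads observed: our best guess at the coin's true bias."""
    return sum(flips) / len(flips)


def standard_error(p_hat, n):
    """Reducible part: how unsure we are about the bias itself after n flips."""
    return math.sqrt(p_hat * (1 - p_hat) / n)


def outcome_std(p_hat):
    """Irreducible part: spread of one future flip, even if the bias were known."""
    return math.sqrt(p_hat * (1 - p_hat))


def simulate(true_bias, n, seed=0):
    """Flip a simulated coin n times and report both kinds of uncertainty."""
    rng = random.Random(seed)
    flips = [1 if rng.random() < true_bias else 0 for _ in range(n)]
    p_hat = estimate_bias(flips)
    return p_hat, standard_error(p_hat, n), outcome_std(p_hat)


if __name__ == "__main__":
    for n in (10, 1_000, 100_000):
        p_hat, reducible, irreducible = simulate(true_bias=0.5, n=n)
        print(f"n={n:>6}  estimate={p_hat:.3f}  "
              f"reducible={reducible:.4f}  irreducible={irreducible:.3f}")
```

More data shrinks the first number toward zero and leaves the second essentially where it started. That is the practical meaning of "no matter how much data you collect."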

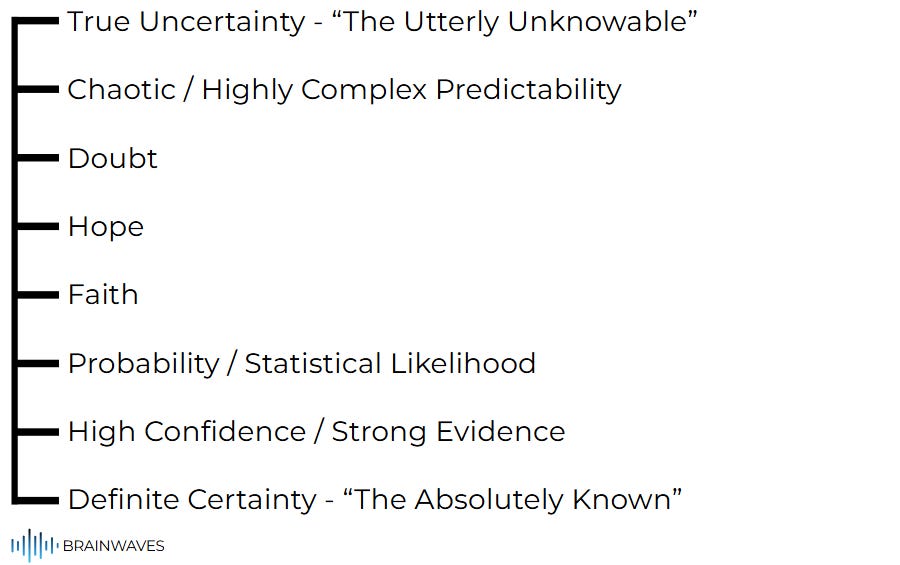

Perhaps you’re flummoxed by most of the above, like I was the first time I dove into this subject. It’s an incredibly complex topic, but one that’s integral to the concept of Futures Thinking. What helped me was thinking of the various concepts in our world as a spectrum, ranging from certainty on one end to uncertainty on the other:

Again, each person reading this will have their individual definitions and intuitions surrounding each of the terms listed on this spectrum. Despite any preconceived notions you may have, I hope this framework continues to provide value.

Diving deeper into each item on the spectrum, we can see the complex balance between the intensity of uncertainty and certainty within each term:

True Uncertainty: As already discussed, this is the realm of things that are fundamentally unknowable, no matter what we do, those comprising Layer 5 in our above framework.

Chaotic / Highly Complex Unpredictability: Matters in this realm are still very uncertain; however, there might be some semblance of knowledge here. This bucket aligns closely with Neumann’s idea of complexity uncertainty and is similar to those things in Layer 4 of our framework.

Doubt: Doubt is a state of mind where there’s a lack of conviction or a hesitation due to insufficient evidence or conflicting information. It’s the personal experience of being in a state of some level of reducible uncertainty where you don’t have the knowledge or the answer, but you believe the answer could exist. Doubt usually lies on the border between Layers 3 and 4.

Hope: Hope emerges when there are significant levels of uncertainty, but a positive outcome is desired and considered possible. It’s a psychological stance in the face of the unknown, often leaning towards the positive even without strong evidence. Hope usually exists in the space of novelty uncertainty (Layer 3).

Faith: Faith is a stronger conviction or belief (than hope) in something in the absence of complete empirical proof. It often implies trust or confidence in a system, a deity, or an outcome, even when the data is incomplete or the future is uncertain. It bridges the gap between the known (Layer 2) and the unknown (Layer 3), often in areas where true certainty is elusive or impossible through empirical means.

Probability / Statistical Likelihood: Probability exists in the realm of measurable uncertainty, which Knight would define as risk. While individual outcomes are not certain, we can quantify the likelihood of different events (through statistical probabilities). This is where fields like actuarial science, forecasting, and data-driven prediction exist (Layer 2).

High Confidence / Strong Evidence: This point on the spectrum signifies a strong belief in an outcome or fact, backed by substantial, reliable evidence, though not an absolute proof beyond all doubt. It’s still governed by reducible uncertainty, but most of the uncertainty has already been removed, hence the high confidence.

Definite Certainty: The “opposite” of true uncertainty, this is the realm of facts which are undeniable, universally accepted, and empirically verifiable or logically irrefutable. This is the realm of Layer 1.

Everything within our lives sits in one of three places: (1) it expressly sits somewhere on this spectrum (e.g., it exists in the realm of probability), (2) it sits between multiple items on this spectrum (e.g., it is subject to hope and doubt), or (3) it affects all of the items on this spectrum.

For instance, I’ve always been fascinated by the concept of curiosity and our motivations for being curious. Curiosity flourishes in the middle of this spectrum (in the realm of Doubt and Hope). It’s the engine that drives us to move from “not knowing” towards “knowing.” It’s a desire to reduce uncertainty, or at least to understand the nature of the concept at hand further. As Jenny Odell writes in How to Do Nothing: Resisting the Attention Economy, “Curiosity, something we know most of all from childhood, is a forward-driving force that stems from the differential between what is known and not known.”

To offer another example, the concept of confidence spans the entire spectrum. It’s low in True Uncertainty and Chaotic Unpredictability; it’s what we lack in Doubt; it’s a desired state in Hope; it’s a foundational element in Faith; it becomes quantifiable in Statistical Likelihood; it’s strong and evidence-based in High Confidence; and it’s an absolute in Definite Certainty.

Lastly, the concept of impossibility acts as a boundary or a perceived limit on the spectrum. In True Uncertainty, something might be truly impossible to know. At the Definite Certainty end, impossibility defines what cannot be true given what we know (e.g., it’s impossible for a square to be a circle). Throughout the spectrum, what’s “impossible” can shift over time. What was once deemed impossible due to a lack of knowledge (e.g., human flight, space travel) became possible. Thus, the idea of impossibility often marks the current boundary of human knowledge or capability.

Credit Recalbox

LIFE IS MUCH MORE LIKE TETRIS THAN CHESS - UNCERTAINTY IS UNIQUE AND SUBJECTIVE - WE UNCONSCIOUSLY SIMPLIFY, YET THAT HURTS US IN THE LONG RUN

Noah Rasheta, over at the Secular Buddhism Podcast, often talks about how he thinks life is best compared to a game.

Traditionally, we often approach life as we would a game of chess - strategic, planned, thoughtful, with every move carrying a significant weight of consequences. As such, we have expectations all the time for how life will be (we are relatively certain about what’s to come - or we have hope/faith/doubt). However, as Noah writes, our lives are much more like the game of Tetris:

I recently read an article that was circulating on Facebook that I really liked that said, “Life is like a Tetris game and we need to quit playing it like it's a chess game.”

I thought, how appropriate. That really is a healthy way of viewing life. It’s like Tetris. You’re playing and then objects present themselves and you never know in what configuration. The whole purpose of the game is learning to take what presents itself and arranging it or twisting it in a way that works best, and it’s never ideal because you have to position it wherever it’s going to fit. Even if that’s not ideal, it might be the most ideal that you were able to work with in the time that you had. Let go of the expectations of what life should be. Quit playing the chess game and learn to see life like Tetris.

If we take Noah’s premise as fact, this would imply life is much more uncertain than we traditionally think it is.

If this is truly the case, where does all of this uncertainty throughout life come from?

While there are many niche, individual factors that contribute and combine into the uncertainties we find throughout life, they can be distilled down into 4 main factors:

Inherent Randomness

Lack of Knowledge / Incomplete Information

Complexity and Interconnectedness

Human Behavior and Decision-Making

Inherent randomness is the portion of uncertainty talked about in Layer 5, the irreducible uncertainty present throughout our system. It’s not about the lack of knowledge or ability to obtain knowledge, but rather a fundamental unpredictability present in nature. We’ll largely skip over this topic as it will be discussed at length throughout Tenet #5.

Uncertainty derived from the lack of knowledge or a level of incomplete information is what we discussed in Layers 2 & 3. This is uncertainty that could be reduced if we had more information or a better understanding of the situation at hand. There are two parts to this: missing information and novelty.

Missing information refers to things we don’t know, but others do, or when the information exists but we haven’t accessed it yet. Examples of this could be data gaps (insufficient data to build robust models or make confident predictions), hidden information (intentional withholding of information by individuals, organizations, or governments), or information overload / intentional information noise (so much information exists that identifying the relevant pieces becomes a challenge).

Novelty, as previously discussed, refers to things that no one knows yet but can be discovered in the future. Examples of this could be scientific frontiers, unexplored territories, or innovation (the outcome of new technologies, business models, or creative works before they exist).

Our discussions throughout Tenets #1, #2, and #3 largely centered on the complexity and interconnectedness of our world, a key cause of its uncertainty. As systems become more complex and interconnected, their behavior causes cascading effects which can blur the lines between what’s knowable and unknowable.

As discussed, the properties of the world that contribute to this complexity and therefore to uncertainty are the emergent properties of the world (the whole is greater than the sum of the parts), feedback loops (amplifying changes into large, uncertain outcomes), interdependency (events can have unforeseen ripple effects elsewhere in the world), and adaptive systems (learning, adapting, and evolving in response to changes in the world, discussed more in Tenet #6).

Lastly, humans are a primary source of both reducible and irreducible uncertainty. People don’t always act rationally or predictably, with emotions, biases, and limited information often influencing decisions. Furthermore, the concept of free will introduces fundamental unpredictability in each individual choice. Aggregated together, the decisions of billions of people can lead to emergent phenomena that are hard to foresee. Finally, intentional actions by human actors can be hard to predict or influence, including wars, policy changes, protests, and entrepreneurial ventures.

It’s my opinion that the world is much more uncertain than we would give it credit for. This theory is based in part on my unique experience of the world, the foundational principles of the world we’ve discussed throughout Tenet #1, #2, and #3, and the writings of those infinitely more experienced than I in contemplating these matters.

Why might people perceive more certainty than truly exists in life?

Drawing on many of the themes from Tenet #3: when we simplify life (similar to zooming in on an exponential curve until it looks linear), we rule out some sources of uncertainty in life.

By simplifying life as such, we essentially ignore or downplay sources of uncertainty that are too distant, too complex, or too slow-moving to impact our immediate decision-making. We focus on the reducible uncertainties that our current tools and knowledge can handle while setting aside the irreducible uncertainties (a tragic occurrence which will only plague us in the long run).
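The zoomed-in view can be sketched numerically. The snippet below is a rough illustration, assuming a hypothetical 5% growth rate per period (the `RATE` value and the function names are mine, not drawn from any source): it compares an exponential curve with the straight line you would see if you only looked at its starting point, and reports how much of the real outcome the straight-line view misses as the horizon grows.

```python
import math


def exponential(t, rate):
    """The 'global' view: compounding growth after t periods."""
    return math.exp(rate * t)


def linear_view(t, rate):
    """The 'local' view: the tangent line at t = 0, as seen when zoomed in."""
    return 1 + rate * t


def relative_gap(t, rate):
    """Share of the actual outcome that the linear view fails to account for."""
    actual = exponential(t, rate)
    return (actual - linear_view(t, rate)) / actual


RATE = 0.05  # hypothetical 5% growth per period, chosen only for illustration

for horizon in (1, 10, 50, 100):
    print(f"t={horizon:>3}  share missed by linear view = "
          f"{relative_gap(horizon, RATE):.1%}")
```

Over a single period the simplification costs almost nothing, which is why it feels safe. Over long horizons it misses most of what actually happens, which is where the trouble in the long run comes from.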

Nassim Taleb’s book, The Bed of Procrustes, summarizes the central problem: "we humans, facing limits of knowledge, and things we do not observe, the unseen and the unknown, resolve the tension by squeezing life and the world into crisp commoditized ideas."

Taleb’s comment about the “things we do not observe, the unseen and the unknown” refers to the hidden variables, the non-linear interactions (discussed in Tenet #3), and the distant ripple effects that our simplified models or immediate perceptions fail to capture—those factors contained in Layers 3-5.

The core of our simplification strategy is “squeezing life and the world into crisp commoditized ideas.” We categorize and label things in our world, even if they are continuous or fuzzy, to make them discrete and manageable. We create models, scientific theories, economic models, and business plans that simplify reality. We tell ourselves stories that make sense of chaotic events, often imposing linearity and cause-and-effect where there might be none. We focus on averages and normal distributions, ignoring the fat tails or extreme outliers that can cause major disruption.
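To give a feel for what ignoring the fat tails costs, here is a small sketch comparing how often an extreme value shows up under a normal distribution versus a fat-tailed one. The choice of the standard Cauchy distribution as the fat-tailed stand-in is my own assumption, picked because its tail probability has a simple closed form; real-world quantities will follow other distributions.

```python
import math


def normal_tail(k):
    """P(X > k) for a standard normal variable."""
    return 0.5 * math.erfc(k / math.sqrt(2))


def cauchy_tail(k):
    """P(X > k) for a standard Cauchy variable (a textbook fat-tailed case)."""
    return 0.5 - math.atan(k) / math.pi


for k in (2, 5, 10):
    n, c = normal_tail(k), cauchy_tail(k)
    print(f"k={k:>2}  normal={n:.2e}  fat-tailed={c:.2e}  ratio={c / n:.1e}")
```

Near the center the two models look alike, so the normal assumption feels harmless. Far from the center, the normal model treats as practically impossible the very events that the fat-tailed model says will turn up regularly.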

It’s due to these factors, among others, that people perceive more certainty than truly exists in life.

These factors provide a “functional certainty” that allows us to operate in the world precisely because we apply these simplifications. For most daily tasks, these “crisp commoditized ideas” are good enough, providing the necessary functional certainty to navigate life. In a sense, our brains and societal systems “ignore” certain sources of uncertainty for the sake of efficiency and sanity.

This drive for certainty largely comes from our “local”, “zoomed in” experience of the world, a view focused on the day-to-day, the immediate environment, and short-term plans. When we zoom out to the “global” view (a long-term, systemic, interconnected view), the uncertainties become much more apparent. Unfortunately, in the day-to-day of our lives, the “local” view primarily dominates our experience (leaving us with blind spots around uncertainty).

Taleb’s quote explains how we make uncertainty manageable. We don’t eliminate it, but we transform it through cognitive and systematic simplification into something we can work with.

Our efforts to make uncertainty manageable highlight yet another property of uncertainty: its subjectivity. In his book, Amar Bhide discusses how uncertainty is “a personal (‘subjective’) mental state that covers future events that no one can observe before they occur.”

Two different people can look at the exact same situation and have different levels of subjective uncertainty about its outcome. For instance, using an example from our layering discussion above, the knowledge of the best way to fix a plumbing issue in your house would be a Layer 2 uncertainty for most people, but for a plumber, it would be a Layer 1 uncertainty. This is why decision-making under uncertainty often feels less like a calculation and more like a leap of faith.

Yet, despite its subjectivity and our attempts to manage it, our reactions to uncertainty—while they, in theory, allow us to live “easier” lives—cause other, hidden consequences. As Taleb writes in The Black Swan, the simplifications and reductions that we do to the world have negative consequences for our perspective of the world: “Any reduction of the world around us can have explosive consequences since it rules out some sources of uncertainty; it drives us to a misunderstanding of the fabric of the world.”

We’ll discuss the impact of the level of information we have later on in this article, but this hints at the fact that our reactions to uncertainty (simplifying the world) disregard some of that uncertainty, leaving us vulnerable to Black Swan events and other blind spots.

Credit Aesthetic Dental

THE SIMPLE ANSWERS SUPPLIED BY RELIGIONS SATISFY OUR UNCERTAINTY CRAVINGS - OUR DAY-TO-DAY EXPERIENCE IS FULL OF TRANSITORY CERTAINTIES - FEAR OF UNCERTAINTY DRIVES US TO FIND CERTAINTY WHERE THERE MAY NOT BE ANY

Humans are always craving certainty. As Susan Cain writes in Quiet: The Power of Introverts in a World That Can’t Stop Talking, humans have many primal, innate needs, “for love, certainty, variety, and so on.”

Our brains are prediction machines; they thrive on patterns, order, and predictable outcomes. Uncertainty, especially deep, irreducible uncertainty, triggers anxiety, stress, and discomfort.

From an evolutionary perspective, predicting danger was crucial for survival; those who were unable to predict and stay away from danger died. This innate drive for certainty is deeply ingrained.

This is what draws many people to organized religions: the opportunity to get questions they have answered. Rephrased, religions provide certainty to uncertain issues.

Religions, especially Western religions, often provide answers to questions that fall into our Layer 5 bucket of “things nobody can know”, an area of extreme uncertainty that often defies empirical or logical proof.

Where did life start? Science offers theories (the Big Bang, evolution), but often leaves many questions unanswered: “how did it start from nothing?” or “why this particular universe?” Religions provide creation narratives that offer definitive answers.

The question of “why are we here?” is deeply uncertain. As such, many humans live their lives craving the answer, craving some meaning in their lives through this secret. Religions often provide a clear, generally divinely ordained purpose.

Another, hilariously paradoxical quandary that faces people is the question of death. See, the only true certainty in life (according to many religions, spiritual traditions, and philosophies) is that everyone will die. Given this, many struggle to grasp what happens after they (or loved ones) die. Religions offer detailed, often comforting, certainties about an afterlife, reincarnation, or spiritual continuation.

Religions offer weary inquisitors a path to transform deep, subjective uncertainty into a form of faith (between hope and probability on the spectrum). This faith, for adherents to most religions, becomes a form of certainty, a conviction held despite the absence of empirical proof—as described in Sam Harris’s book The End of Faith. These religious answers, while providing certainty, could easily be seen through Taleb’s lens as “squeezing life and the world into crisp commoditized ideas.”

Christophe Andre, in his book Looking at Mindfulness: Twenty-Five Paintings to Change the Way You Live, provides the following quote from Paul Valery, a French poet and philosopher, "The mind flits from one silliness to the next, as a bird flits from branch to branch. It can do nothing else. The main thing is not to feel stable on any one of them." As Andre puts it, “Our minds need transitory certainties, just as birds need branches.”

Similar to the concept of religion providing answers to critical uncertainties in our lives, our minds within themselves search for answers, dubbed “transitory certainties.” Our minds, in their constant processing of information and decision-making, don’t reside in a state of absolute, unchanging certainty about everything; instead, our minds operate by constructing and moving between temporary, functional certainties.

In essence, these transitory certainties are the operational certainties of daily life. They are what allow us to get things done, to make decisions, and to avoid being overwhelmed by the sheer volume of things we don’t, can’t, or won’t know. They bridge the gap between the innumerable certainties of the physical world and the ever-shifting landscape of the unknown.

It’s through these transitory certainties that we literally believe the world is more certain than it actually is (proving the theory I introduced above). Susan Cain highlights this powerfully in her book:

Similarly, in his book on the run-up to the 2008 crash, The Big Short, Michael Lewis introduces three of the few people who were astute enough to forecast the coming disaster. One was a solitary hedge-fund manager named Michael Burry who describes himself as "happy in my own head" and who spent the years prior to the crash alone in his office in San Jose, California, combing through financial documents and developing his own contrarian views of market risk. The others were a pair of socially awkward investors named Charlie Ledley and Jamie Mai, whose entire investment strategy was based on FUD: they placed bets that had limited downside, but would pay off handsomely if dramatic but unexpected changes occurred in the market. It was not an investment strategy so much as a life philosophy---a belief that most situations were not as stable as they appeared to be. This "suited the two men's personalities," writes Lewis. "They never had to be sure of anything. Both were predisposed to feel that people, and by extension markets, were too certain about inherently uncertain things."

Our cravings for certainty—whether satisfied by religion, transitory certainties, or another form—can blind us to the truth of reality: that life is much more uncertain than we would think. This blind view of reality can leave us exposed to uncertain, powerful events (similar in nature to the 2008 stock market crash).

Besides religion and transitory certainties, how does this dynamic of craving certainty play out throughout the real world?

As previously touched on, people are often too certain about uncertain things. This could also be called the “faith effect” or the “hope effect.” This phenomenon is largely due to a range of deeply ingrained cognitive biases and fundamental psychological needs that drive us to seek, create, or maintain a sense of certainty.

Much of this is due to the innate fact that humans fear uncertainty. Our brains are essentially hardwired to react with fear to uncertainty. In a recent neurological study, a Caltech researcher took images of people’s brains as they were forced to make increasingly uncertain bets. The less and less information the subjects had to go on, the more irrational and erratic their decisions became. As the uncertainty of the scenarios increased, the subjects’ brains shifted control over to the limbic system, the place where emotions such as anxiety and fear are generated.

As Noah Rasheta, over at the Secular Buddhism Podcast, puts it, “the problem isn’t that there is uncertainty in life, the problem is that we’re not okay with the uncertainty that there is in life.” To put it simply, our fear of uncertainty pushes our minds to crave certainty (even when there isn’t any to be found), so we search out places of certainty, such as religion, transitory certainties, or elsewhere.

In our efforts to manage uncertainty, through our craving and progression towards certainty, the temporal dimension is a crucial topic to highlight briefly (we’ll discuss it much more in Tenet #11).

By definition, true uncertainty is always a factor of the future. You cannot have uncertainty about the past (though you can have doubt about what happened if the information is missing, contradictory, or otherwise unyielding).

Once the event has occurred, the uncertainty is resolved (for that specific instance), and knowledge is created (shifting the knowledge/uncertainty threshold from Layer 3 to Layer 1 or 2).

Our cravings for certainty therefore always revolve around the future, which makes them essential to understand if we’re going to address how we think about and manage our mindset towards the future (the core concept/goal of the Futures Thinking series).

Don’t worry, we’ll enter the whole temporal continuum much more in depth later (I’ll spare you the gory details for now).

Credit Nuclear Energy Agency

HOW DO WE KNOW WHAT WE KNOW? - THE LEVEL OF INFORMATION USUALLY TRANSLATES TO OUR LEVEL OF UNCERTAINTY - DEALING WITH “ONE-OFFS” IS INCREDIBLY COMPLICATED, AS THERE IS NO PLAYBOOK

The movie 21—an above-average heist film focusing on advanced mathematics, gambling, and poetic justice—uses the example of the 3 door game show problem (also known as the Monty Hall problem). In the game show, a contestant is given the opportunity to pick between 1 of 3 doors. Behind one door lies a brand new car; behind the other two, goats. The contestant begins by picking a door. The host then opens a different door, revealing a goat. Then the contestant is given the opportunity to switch to the remaining closed door.

If you were the contestant, should you switch or stay?

You’ve probably heard about this problem, so you know you should switch (if you don’t understand the logic behind this, here’s some helpful information). The key to the problem: the value of missing information, showcased when that information is revealed.

Unfortunately for our discussion today, the Monty Hall problem isn’t governed by uncertainty; instead, it’s governed by the realm of risk and statistical probabilities. However, it does provide a solid basis for the value of information within this realm.
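If you'd rather check the switching logic than take it on faith, a quick simulation can help. The following is a minimal sketch in Python, not anything taken from the movie: the function names (play_round, win_rate), the number of rounds, and the random seed are all illustrative assumptions of mine. It plays the game many times under both strategies and compares how often each one wins the car.

```python
import random


def play_round(switch: bool, rng: random.Random) -> bool:
    """Play one round of the three-door game; return True if the car is won."""
    doors = [0, 1, 2]
    car = rng.choice(doors)    # door hiding the car
    pick = rng.choice(doors)   # contestant's first pick
    # The host opens a door that is neither the contestant's pick nor the car.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Move to the one door that is still closed and was not picked.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car


def win_rate(switch: bool, rounds: int = 100_000, seed: int = 0) -> float:
    """Estimate the fraction of rounds won when always switching or always staying."""
    rng = random.Random(seed)  # fixed seed (an assumption) so runs are repeatable
    wins = sum(play_round(switch, rng) for _ in range(rounds))
    return wins / rounds


stay_rate = win_rate(switch=False)
switch_rate = win_rate(switch=True)
print(f"stay:   {stay_rate:.3f}")
print(f"switch: {switch_rate:.3f}")
```

Staying should win roughly one time in three and switching roughly two times in three. The host's reveal is new information, and only the switching strategy makes use of it.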

How do we know what we know?

Ambiguity is such an intriguing word, defined by the Oxford Languages Dictionary as “the quality of being open to more than one interpretation.” In other words, ambiguity could be a measure of the completeness and certainty of the information present.

Amar Bhide writes about ambiguity in our context, defining it as “known-to-be-missing information, or not knowing relevant information that could be known.” In this case, ambiguity would be a property of Layers 2, 3, and 4.

We all interpret information differently, giving more weight to some pieces of information over others. Bhide describes these as “unambiguous observations”, otherwise known as “objective” facts, what people in a crime drama would label as “hard evidence.” Bhide elaborates:

For example, in criminal investigations, closed-circuit video recordings of a suspect’s movements carry more weight than the recollections of an elderly eyewitness. Similarly, in medical trials, blood tests and biopsies are more persuasive than patients’ self-reported feelings of wellness.

The omission of these unambiguous observations creates the opposite effect, where the lack of information leads to doubts. Bhide states:

For example, in a murder trial, forensic evidence that places a suspect at the scene of the crime is relevant, doubt-reducing information, and placebo results are relevant in drug trials. Self-evidently, the absence of forensic evidence or placebo results increases doubts. However, missing—yet objective—information about a suspect’s zodiac sign should not affect a reasonable juror’s doubts.

In most cases, more missing information increases doubts. Relating to the broader discussion, often, more evidence raises confidence toward certainty, and the absence of evidence pushes doubt toward uncertainty. Realistically, we expect evidence to reduce rather than increase mistakes.

Doubts are an integral part of the completeness of information. As Amar Bhide writes, “Doubts about correctness should be the logical default. True and certain knowledge, according to ancient Greek and Indian skeptics and many seventeenth- and eighteenth-century thinkers, is impossible.”

Similarly, the philosopher Hume states that all worldly matters of fact warrant some doubt. In his opinion, we can never be absolutely certain about natural events (earthquakes, volcanic eruptions) or human efforts (knitting, technological development, landing on the moon). Now, if you’re like me, you might be expressing some doubt about the extent of Hume’s theorizing, but that’s beside the point.

Bhide writes that uncertainty is “doubt produced by missing information,” but expressly highlights that this definition is incomplete as it excludes unknown unknowns (Layer 3) and unimaginable possibilities (Layer 5).

Complete certainty isn’t always necessary. In some cases, missing information is fine and sometimes even helps. For instance, successful “beyond a reasonable doubt” prosecutions do not remove all possible doubt about guilt, just enough for jurors to feel confident about convicting. Many jurors, if asked, would say they were “completely certain,” even though we both know that, given our layers of uncertainty, there’s no way they are absolutely 100% certain.

Likewise, where there is doubt, there is the possibility of disagreements. If you’ve watched any trial portrayal in a film or a TV show, you’ve probably seen how jurors in a complicated trial can passionately disagree when any level of doubt is present. This is what makes watching these so exciting: the certainties and uncertainties (Layers 1-4) present in each one of them (and everyone else in the show).

Many times, the passionate disagreements portrayed in these cinematic masterpieces (usually framed as the “trial of the century”) are simply “one-offs.” In this context, the phrase “one-offs” refers to an event, situation, system, or world predicament that is unique, specific, and often non-repeatable in the exact same way.

Because a one-off is so specific and unique, it makes it difficult to apply broad generalizations or objective probabilities. In the ideology of Packy McCormick, there is no playbook that accurately and completely explains how to deal with these situations. It is here where subjective uncertainty and doubt come into play.

Doubts often have contextual or specific (“one-off”) targets, such as whether a patient has heartburn or whether they have an acute type of cancer. Similarly, in reducing doubts about “one-offs”, contextual evidence is pivotal.

This highlights arguably the most important part of the Futures Thinking series: if you take the “one-off” theory to its logical extreme, you’ll find that every single event in our world, from the beginning of time to this very moment, is technically a “one-off” and, as such, is subject to high levels of irreducible uncertainty.

Stephen Batchelor characterizes this perfectly in his book Buddhism Without Beliefs: A Contemporary Guide to Awakening:

To dwell in unknowing perplexity before the breath, the rain, a chair is much the same as dwelling in unknowing perplexity before an unformed lump of clay, a blank sheet of paper, an empty computer screen. In both cases we find ourselves hovering on the cusp between nothing and something, formless and form, inactivity and activity. We are poised in a still, vital alertness on the threshold of creation, waiting for something to emerge (the next in-breath or the first tentative shaping of the clay) that has never happened in quite that way before and will never happen in quite that way again.

Credit Risk Ledger

THE FALL OF THE SOVIET EMPIRE AND 9/11 ILLUSTRATE THE TRUE IMPACT OF UNINCORPORATED UNCERTAINTIES - TALEB’S CONCEPT OF A BLACK SWAN EVENT - PERCEIVING CERTAINTY WHEN THERE IS UNCERTAINTY LEAVES US VULNERABLE TO BLACK SWAN EVENTS

In 1985, Mikhail Gorbachev was elected General Secretary of the Communist Party of the Soviet Union. He aimed to revive the stagnating Soviet economy, devastated after two world wars and the long-standing nuclear standoff with the United States.

Gorbachev’s policies of openness and restructuring inadvertently accelerated the USSR’s demise. By allowing greater freedom of expression, the policy of openness unleashed pent-up public discontent and nationalist aspirations, while the restructuring policy led to chaos and shortages rather than revitalization.

Gorbachev’s 1989 decision to abandon military intervention in Eastern Europe further emboldened independence movements throughout the Soviet republics.

In 1991, a failed Communist coup dramatically weakened central authority, prompting most republics, led by Boris Yeltsin’s Russia movement, to declare full independence.

By December of 1991, the formation of the Commonwealth of Independent States by Russia, Ukraine, and Belarus effectively dissolved the Soviet Union, leading to Gorbachev’s resignation.

Only a decade later, the world was shocked yet again by another global calamity.

On September 11th, 2001, 19 hijackers seized control of four commercial passenger jets, performing a series of devastating terrorist attacks orchestrated by al-Qaeda.

Two planes were deliberately crashed into the North and South towers of the World Trade Center in New York City. Both towers collapsed within hours, causing global panic.

A third plane struck the Pentagon, leading to a partial collapse of the western side of the building. The fourth plane crashed into a field after passengers and crew fought back against the hijackers.

Overall, the attacks resulted in the deaths of nearly 3,000 people, making it one of the deadliest terrorist attacks in history.

The link between the two? Both were Black Swan events.

The phrase “black swan” is derived from 2nd-century Romans who talked about “a bird as rare upon the earth as a black swan.” This phrase became a common expression for a statement of impossibility; however, in 1697, Dutch explorers recorded the first known European sighting of black swans in the wild.

Subsequently, the term came to connote the idea that a perceived impossibility might later be disproved. The term was popularized in modern culture by Nassim Taleb through his books and other writing on the subject, culminating in his most popular work, The Black Swan.

As Taleb describes them, Black Swan events exhibit the following attributes:

First, it is an outlier, as it lies outside the realm of regular expectations, because nothing in the past can convincingly point to its possibility. Second, it carries an extreme 'impact'. Third, in spite of its outlier status, human nature makes us concoct explanations for its occurrence after the fact, making it explainable and predictable.

I stop and summarize the triplet: rarity, extreme 'impact', and retrospective (though not prospective) predictability. A small number of Black Swans explains almost everything in our world, from the success of ideas and religions, to the dynamics of historical events, to elements of our own personal lives.

Taleb found the term and the callout of such Black Swan events as a way to explain:

1) The disproportionate role of high-profile, hard-to-predict, and rare events that are beyond the realm of normal expectations in history, science, finance, and technology.

2) The non-computability of the probability of consequential rare events using scientific methods (owing to the very nature of small probabilities).

3) The psychological biases that blind people, both individually and collectively, to uncertainty and to the substantial role of rare events in historical affairs.

Relating to the discussion of completeness of information and its role in uncertainty, Black Swan events make what you don’t know far more relevant than what you do know. In other words, Layers 2, 3, 4, and 5 are much more important than Layer 1.

To be explicit about the concept’s relevancy to our core topic today, most Black Swan events are caused by and are exacerbated by being unexpected (a product of uncertainty).

Taleb introduces the concept he dubs the “Platonic fold”, referring to the boundary where the Platonic mindset—the desire to cut reality into crisp shapes (similar to the concept of simplification we discussed earlier)—collides with the messy, unpredictable, uncertain nature of true reality. This Platonic fold is where the gap between what you know and what you think you know becomes “dangerously wide.” In Taleb’s words, “it is here that the Black Swan is produced.”

The dichotomy between what you know and what you think you know is exhibited by the extrapolation of the past onto the future (a discussion we’ll have in Tenet #5). My favorite analogy for this is the story of the turkey (which I used in Tenet #3 and will deploy yet again here): for the first 1,000 days of its life, the turkey goes out and eats its food. From a normal linear viewpoint (see Tenet #3), you would expect this behavior to continue on the 1,001st day. But what if the 1,001st day is Thanksgiving?

Because the modern world is exponential, it’s dominated by very rare events. It can deliver a Black Swan event (this would be the equivalent of Thanksgiving for the turkey) after thousands of normal days, which Taleb dubs “White Swans” (these would be normal eating days for the turkey).

As Taleb describes it, “Mistaking a naive observation of the past as something definitive or representative of the future is the one and only cause of our inability to understand the Black Swan.” Our story of the turkey portrays this phenomenon, showcasing how our traditionally linear extrapolations of the world (i.e., perceiving certainty when in fact there is uncertainty) can leave us drastically exposed to a Black Swan event.
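To put a rough number on the turkey's growing confidence, here is a small sketch in Python. It uses Laplace's rule of succession, a classical way of estimating the chance of "one more success" after an unbroken run of successes. That choice is my own stand-in for "naive extrapolation from the past," not Taleb's method, and the function name naive_confidence is purely illustrative.

```python
def naive_confidence(days_fed: int) -> float:
    """Laplace's rule of succession: the estimated chance of being fed tomorrow
    after `days_fed` consecutive fed days and no bad days so far."""
    return (days_fed + 1) / (days_fed + 2)


# The turkey's estimate creeps toward certainty as uneventful days pile up.
confidence_by_day = {day: naive_confidence(day) for day in (1, 10, 100, 1000)}
for day, confidence in confidence_by_day.items():
    print(f"after day {day:>4}: estimated chance of being fed tomorrow = {confidence:.4f}")
```

The estimate is at its highest on the eve of Thanksgiving, which is the whole problem. Nothing in the turkey's data set describes the one day that matters, so more observations only make it more confident.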

Relating to our discussions of Linearland and Exponentland in Tenet #3, Taleb discusses how “our world is dominated by the extreme, the unknown, and the very improbable (improbable according to our current knowledge)—all the while we spend our time engaged in small talk, focusing on the known, and the repeated.”

By focusing on the known and the repeated—the reductions and simplifications of life we discussed above—we neglect uncertainties present, a practice which leaves us vulnerable to Black Swan events.

It’s only through delving into uncertainty that we can begin to solve the first property of Black Swan events: “First, it is an outlier, as it lies outside the realm of regular expectations, because nothing in the past can convincingly point to its possibility.”

Taleb discusses how, contrary to common notions throughout society, almost no discoveries and no technologies of note came from design and planning (i.e., came from realms of certainty). Instead, they were just Black Swans.

These Black Swan events are unpredictable, meaning we need to adjust to their existence, rather than trying to naively predict them (we’ll discuss more in Tenet #5). In Taleb’s opinion, we need to focus on what he refers to as “antiknowledge.” Antiknowledge is the collection of things we don’t know. Focusing solely on what we know can lead to overconfidence and blindness to the possibility of Black Swan events.

Taleb uses the term “antilibrary” to describe a fictional collection of unread books, suggesting that these books represent the antiknowledge that we don’t yet have access to. To clarify, antiknowledge is not simply the opposite of knowledge; it’s a recognition of the vastness of the unknown and the potential for unexpected events to have a significant impact.

Credit National Geographic

A METHOD TO DEAL WITH SOME OF THE UNCERTAINTIES PRESENT IN OUR LIVES - WE SHOULD NOT AVOID UNCERTAINTY BUT WE SHOULDN’T EMBRACE IT - EVERY DAY THERE IS MORE UNCERTAINTY THAN THERE WAS YESTERDAY

Traditionally, humanity’s response to uncertainty has been the choice to avoid and overlook it.

As Bhide writes, “uncertainty is banished to the unexaminable, occult world of unknown unknowns.”

In the majority of cases, this strategy works well, and no extensive repercussions are felt.

However, as we’ve seen through the Black Swan effect and other examples, our tendency towards simplification and our reluctance to face facts leaves us vulnerable to the true uncertainties of the world—the ones with exponential outcomes exacerbated through cascading effects.

In brief, the way we approach uncertainties in our lives, especially the uncertainties with extenuating effects, is flawed and needs a critical update.

Given what we know about our blindspots, psychological barriers, and cognitive biases relating to uncertainties, how should we deal with the uncertainties in life?

Now, we can’t fully get rid of our uncertainty problem (there will always be irreducible uncertainties - we simply cannot know everything), but there are some mitigation factors possible for our uncertainty problem:

Conscious awareness of the uncertainties in our lives

Focus on reducible uncertainties but don’t overlook irreducible uncertainties

Adopt flexibility and adaptability in our approach

Utilize diverse perspectives and tools

Throughout Buddhism, the idea of conscious awareness is integral to the practice, a key practice for cultivating understanding and equanimity. Awareness is seen as a fundamental capacity for realizing the true nature of things and achieving enlightenment.

Awareness is critical to addressing our uncertainty predicament. Specifically, we’re trying to become aware of the fact that there are things we don’t know, and things we don’t know that we don’t know.

Awareness can help us recognize our cravings for certainty, helping us become aware of our own discomfort with ambiguity. Actively looking for biases in our own thinking like this can help us address shortcomings before they significantly impact our lives.

Practicing awareness helps us be open to the idea that we may be wrong, that our knowledge may be incomplete, or that the situation is more complex than we initially thought. We should constantly be asking, “What might I be missing here?”

Awareness can help us differentiate between the types of uncertainty: reducible and irreducible. As such, we can focus on reducible uncertainties, directing our energy towards reducing uncertainties that are knowable. Yes, we should focus on reducible uncertainties—they allow us to live our lives more easily and more efficiently.

That isn’t to say, however, that we should overlook the irreducible uncertainties in our lives. Becoming aware of these irreducible uncertainties, even though we can’t necessarily do anything about them, is key to reducing our blindspots.

As discussed, a property of these “Black Swan” events, which come as the result of these irreducible uncertainties, is that they are outliers, outside the realm of regular expectations. If we see these outliers before they materialize, their ability to sneak up on us and truly impact our lives is significantly diminished.

Similarly, as Amar Bhide writes, “experience suggests that we should avoid certitudes.” Like our tendency to avoid irreducible uncertainties, we also have a tendency to overlook our certainties in life (taking them as fact, so there is no more mental stratagem necessary to tackle that issue). These certainties pose a parallel issue, wherein we feel (since we are certain about them) that we no longer need to think about them, therefore exposing us to blindspots.

We’ll discuss the other ways to address the uncertainty in our lives in later articles: adopt flexibility and adaptability in our approach (we’ll discuss in Tenet #6) and utilize diverse perspectives and tools (we’ll discuss in Tenet #7).

Ultimately, these approaches don’t exactly solve the problem. Bhide explains this very explicitly: “I don’t believe meaningful universal rules for managing real-world uncertainties are even possible.”

The goal, as Knight argued, is to find the middle course between striving to avoid uncertainty entirely (which we’ve seen is impossible) and plunging headfirst into the darkness, which, as he puts it, is “reckless.”

To summarize, I believe this quote from Taleb explains uncertainty and our response to it perfectly:

The more I think about my subject, the more I see evidence that the world we have in our minds is different from the one playing outside. Every morning the world appears to me more random than it did the day before, and humans seem to be even more fooled by it than they were the previous day.

There’s way more to discuss here—this is only the tip of the iceberg.

Congrats, we’ve made it through Tenet #4. Hope you enjoyed it. Please give me any feedback you have—happy to clarify or elaborate further on anything discussed.

In future articles, we’re going to dive deeper into the way we go about prediction and deciphering our uncertain world, starting with Tenet #5:

Human prediction is often flawed due to biases, complexity, and blind spots.

That’s all for today. I’ll be back in your inbox on Saturday with The Saturday Morning Newsletter.

Thanks for reading,

Drew Jackson

Stay Connected

Website: brainwaves.me

Twitter: @brainwavesdotme

Email: brainwaves.me@gmail.com

Thank you for reading the Brainwaves newsletter. Please ask your friends, colleagues, and family members to sign up.

Brainwaves is a passion project educating everyone on critical topics that influence our future, key insights into the world today, and a glimpse into the past from a forward-looking lens.


Disclaimer: The views expressed here are my personal opinions and do not represent any current or former employers. This content is for informational and educational purposes only, not financial advice. Investments carry risks—please conduct thorough research and consult financial professionals before making investment decisions. Any sponsorships or endorsements are clearly disclosed and do not influence editorial content.