A Chocolatier's 100-Year Predictions Highlight Our Limitations

A Futures Thinking Perspective

👋 Hello friends,

Thank you for joining this week's edition of Brainwaves. I'm Drew Jackson, and today we're exploring:

Predictive Abilities & Limitations

Key Question: Should we always take predictions as fact? In what ways are our predictive abilities limited?

Thesis: Our ability to predict accurately is largely limited to ordinary, repetitive circumstances. Any departure from such circumstances leaves us vulnerable to biases, blind spots, and the complexities of the world. Awareness of this fact, and of the consequences that potential deviations entail, is key to addressing the limits of our predictive abilities. Overall, we should approach any and all predictions we come across with an air of overwhelming skepticism.

Credit Gemini

Before we begin: Brainwaves arrives in your inbox every other Wednesday, exploring venture capital, economics, space, energy, intellectual property, philosophy, and beyond. I write as a curious explorer rather than an expert, and I value your insights and perspectives on each subject.

Time to Read: 60 minutes.

Let’s dive in!

The Owl is a very wise bird; and once, long ago, when the first oak sprouted in the forest, she called all the other Birds together and said to them, “You see this tiny tree? If you take my advice, you will destroy it now when it is small: for when it grows big, the mistletoe will appear upon it, from which birdlime will be prepared for your destruction.”

Again, when the first flax was sown, she said to them, “Go and eat up that seed, for it is the seed of the flax, out of which men will one day make nets to catch you.”

Once more, when she saw the first archer, she warned the Birds that he was their deadly enemy, who would wing his arrows with their own feathers and shoot them.

But they took no notice of what she said: in fact, they thought she was rather mad, and laughed at her.

When, however, everything turned out as she had foretold, they changed their minds and conceived a great respect for her wisdom. Hence, whenever she appears, the Birds attend upon her in the hope of hearing something that may be for their good.

She, however, gives them advice no longer, but sits moping and pondering on the folly of her kind.

- Aesop’s fable, as translated in 1912

The future actively shapes our lives. Historically, the way humans have thought about and approached the future has been flawed. Futures Thinking is a modern approach to the future, rethinking how humans think about and approach the future.

Rather than trying to predict specific future events, Futures Thinking encourages a shift in how we conceptualize the future itself—drawing on diverse cultural perspectives, foundational world characteristics, deep modern literature reviews, and recognizing that our present actions and narratives significantly influence future outcomes. Since most major life decisions are essentially bets on the future, adopting this framework could transform how we approach education, careers, relationships, and other essential aspects of life.

Today, our discussion revolves around how our world is set up and how these underlying characteristics shape everything that goes on in the world, specifically focusing on Futures Thinking Tenet #5: Cognitive limitations—biases, blind spots, and simplification—make unpredictability inevitable.

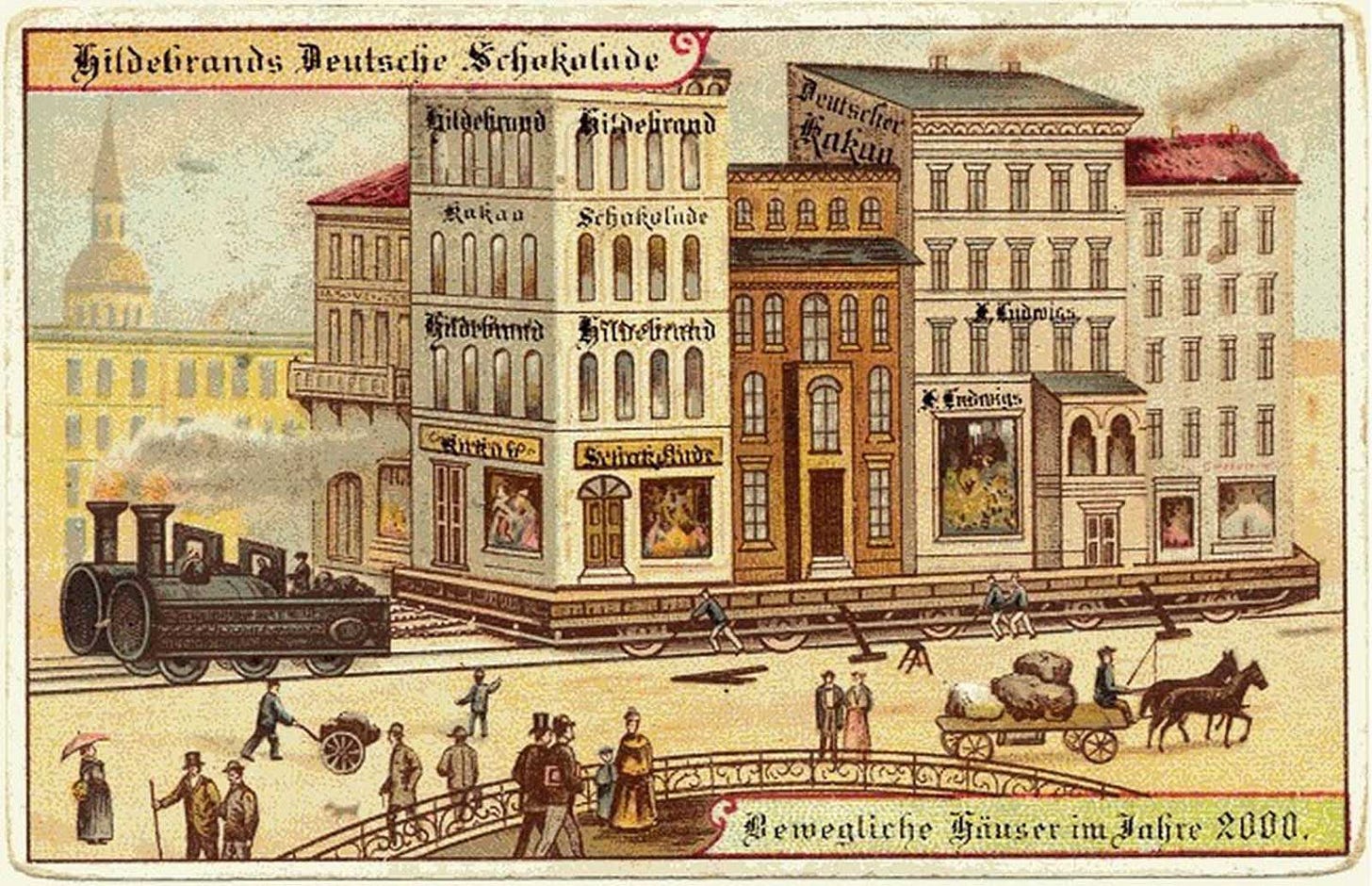

Credit Rare Historical Photos

A CHOCOLATIER’S EXPECTATIONS FOR THE NEXT 100 YEARS - ENTIRE GROUPS OF PEOPLE ARE FOCUSED ON STUDYING AND PREDICTING THE FUTURE - ARE FUTURISTS BETTER AT PREDICTING THE FUTURE THAN THE AVERAGE PERSON?

In 1817, the Theodor Hildebrand & Son chocolate factory was formed by confectioner Theodor Hildebrand in Berlin, Germany. In 1830, following the development of the steam engine and its adoption in Europe, Hildebrand adopted it for use in its factories, enabling the company to offer chocolates at a lower price.

Around 80 years after its founding, the chocolate maker, by then known as Hildebrand’s, undertook a clever marketing campaign (especially clever in hindsight) called Germany in the Year 2000.

As part of the 1900 Paris World’s Fair, the chocolate company commissioned 12 postcards to predict what life would be like 100 years in the future (In the Year 2000, hence the name). You can view the 12 images here.

Hildebrand placed these postcards in the boxes of their chocolates from 1899 to 1910, an early form of collectible items (like the McDonald’s mystery kid’s meal toys).

The associated postcards show a wide variety of predictions for the future, ranging from accurate to absurd to plausible.

Below is a list of the 12 predictions made about our lives today (some have multiple interpretations):

Personal flying machines

X-ray machines for police / x-ray surveillance devices

Personal airships

A live audiovisual broadcast of a theatre performance / watching a live performance while not in the theater

Putting a roof over a city / weather-proof city roofing

Moving an entire city block by rail / steam relocation of mobile houses

Underwater ships for tourists / tourist submarines

Riding and walking on water / strolling on a lake with the aid of balloons

Hybrid rail-water warship

Machine for creating good weather / weather-controlling machines

Excursions to the North Pole / tourism at the North Pole

Moveable sidewalks

With the benefit of hindsight, we can judge the relative accuracy of these predictions, which, given the long timespan, are much more accurate than you would expect similar predictions made in 2000 about the year 2100 to be.

There is an entire field dedicated to this, usually labeled under the term “futurists.” Futurists and those who participate in “futures studies” seek to study and predict possible futures through the analysis of trends, emerging technologies, societal shifts, and other drivers of change.

As Wikipedia puts it, “part of the discipline thus seeks a systematic and pattern-based understanding of past and present, and to explore the possibility of future events and trends.”

Generally, futurists focus on the medium- and long-term horizons, planning and strategizing to anticipate possible future events far into the future—specifically interested in changes of a “transformative impact, rather than those of an incremental or narrow scope.”

In the mid-1940s, the first professional “futurist” consulting institutions (RAND, SRI, etc.) began to engage in long-range planning, systemic trend watching, scenario development, and visioning. They began primarily under military and government contract during WWII, but afterward they began serving private institutions and corporations.

There’s a lot to unpack here. We’ll slowly do that over the duration of this article.

To begin with, let’s start with the most damaging claim that opponents across the spectrum have made about these types of people (whether official futurists acting according to their profession or other casual predictors): They are no better than the common man at predicting the future.

One of those staunch opponents has been Nassim Nicholas Taleb through his book The Black Swan. In it, he aggressively states the following:

These “experts” were lopsided: on the occasions when they were right, they attributed it to their own depth of understanding and expertise; when wrong, it was either the situation that was to blame, since it was unusual, or, worse, they did not recognize that they were wrong and spun stories around it. They found it difficult to accept that their grasp was a little short. But this attribute is universal to all our activities: there is something in us designed to protect our self-esteem. We humans are the victims of an asymmetry in the perception of random events. We attribute our successes to our skills, and our failures to external events outside our control, namely to randomness.

In other words, Taleb claims that these “experts” in prediction take all of the credit when they are correct and don’t take credit when they are incorrect (blaming countless other things). When practiced, this creates the impression that they are better at predicting than they actually are. To use an example from a completely different discipline, this is similar to how some religious zealots frame problems and things that happen in this world: if it’s good, it belongs to God, if it’s bad, it belongs to man.

I’ve already discussed at length how our approach to randomness (and likewise uncertainty) can lead us to fall victim to our own understanding of the world, but we’ll continue elaborating on this train of thought throughout this article.

Taleb doesn’t stop there, however, casting aspersions on the quality of the possible futures predicted by these futurists (and those like them).

To be clear, futurists and those who participate in the field of “futures studies” don’t truly “predict” the future in the sense of offering a single, definitive view of the future (and if they do they are ignorantly naive). Instead, they explore a variety of possible futures to help those interested understand the range of eventualities possible.

For instance, take the example from Hildebrand’s chocolates above. They offer many potential futures, and while many of the predictions are relatively accurate to our reality in the 21st century, there are a lot of key developments missing.

As Taleb puts it,

I ask people to name three recently implemented technologies that most impact our world today, they usually propose the computer, the Internet, and the laser. All three were unplanned, unpredicted, and unappreciated upon their discovery, and remained unappreciated well after their initial use.

From Taleb’s viewpoint, and we’ll explore this more in-depth using his and other philosophers’ perspectives on the thought, many of the most significant developments throughout history simply could not be predicted (at least not far in advance and definitely not by a large number of people).

Sir Francis Bacon, in his 1620 piece Novum Organum, discussed how these most important advances are the least predictable ones, “having no affinity or parallelism with anything that is now known, but lying entirely out of the beat of the imagination, which have not yet been found out.”

As Taleb would label them, these events are “Black Swans.”

Credit Unsplash

FUTURISTS ARE THE CHIROPRACTORS OF FUTURES THINKING - HIGHLIGHTING 5 KEY ISSUES WITH OUR PREDICTIVE ABILITIES - WE ARE TRULY ONLY GOOD AT PREDICTING THE BORING

When I get bored with the present reality I find myself in, I think it’s fun to see what people are thinking tomorrow may look like. I find these stories, predictions, and narratives of the future to be very entertaining.

For instance, I recently came across this article, detailing 10 predictions of what 2035 will look like (published in 2024). To summarize, here are the 10 subjects of the predictions:

The Rise of Living Movies

The Misinformation and Verification Economy

Quantum Hegemony

A(R) New Social Paradigm

Preventive Genomics and Healthcare

The New Reality of Work

ClimateTech Goes Jetstream

Hyper-Hyper-Personalization

AGI, ASI, and Society

The Convergence of Humans and Machines

Honestly, there’s some cool stuff listed there—in many ways, you could read this and be excited about the future (often these articles don’t detail predictions of war, genocide, plague, disease, or any other negative event so the bias is quite evident).

That is… until you realize what these really are (or what they are more properly characterized as): hopes, wishes, faith, all for a “better” world than we have today. Granted, I’ve cherry-picked one article out of many, but the principle holds for quite a large portion of them.

It’s difficult to apply hardened principles to futurists, as doing so severely constricts their profession. A bad analogy would be to call them the chiropractors of Futures Thinking (maybe that’s too far, but alas).

In the section above, I gave voice to the most damaging claim that opponents give when encountering futurists and those who make predictions about the future: They are no better than the common man at predicting the future.

In order to elaborate on this claim, we must first understand the methodologies these practitioners use to predict the future. The main methods are explained below:

Method #1: Trend Analysis & Extrapolation - A key way futurists predict the future is through the analysis of information to draw trends, which they then extrapolate into the future. Extrapolation is a simple form of “forecasting”.

Method #2: Forecasting - Forecasting is the process of looking from the present to the future. It involves analyzing patterns and using statistical models to estimate future outcomes.

Method #3: Backcasting - Backcasting starts by envisioning a future state, then works backward to identify the steps and policies needed to achieve that vision. In other words, you start with the end in mind, then work back to the present.

Method #4: Wildcard Consideration - Wildcards are low-probability, high-impact events that could dramatically alter the future. While these events are hard to predict, considering their potential helps build resilience.

Method #5: Scenario Planning - Scenario planning involves developing multiple plausible alternative futures, crafting detailed stories or descriptions of what these different futures might look and feel like.

Method #6: Cross-Impact Analysis - Cross-impact analysis examines how different trends and events might influence each other, leveraging interdependencies and probabilistic assessments of potential scenarios.

People skeptical of predictions about the future (and of those making them) argue that our capacity to foresee the future is deeply flawed, offering many explanations that poke holes in every aspect of the case for making such forecasts.

These explanations form the basis for our discussion today, highlighting the innate world factors, biases, complexities, and blind spots that restrict or fully prevent our ability to predict with any accuracy.

Prediction Problem #1: We Can’t Predict Novel Futures Because Then They Would Exist in the Present

I’m sure you’re wondering about the Taleb quote I listed above, describing how the three recent biggest developments (the computer, the internet, and the laser) weren’t predicted. Is his claim legitimate? If so, what factors influence our failures to predict these major, novel futures?

It’s difficult to pinpoint the very first “prediction” of the internet, but some sources argue that there were some vague representations of an “internet-like” technology in the late 1800s and early 1900s. An 1879 prediction envisioned a source that provided a constant stream of news; a 1904 prediction envisioned a source that allowed the user to view events all around the world in real time; and a 1909 prediction envisioned a source that was a vast archive of information and offered the ability to communicate with others visually.

Fast forward a couple of decades to the early 1960s, and we see the first “concrete” prediction of the Internet by research scientists Licklider, Kleinrock, Baran, and Roberts. They envisioned a globally interconnected set of computers through which everyone could quickly and easily access data and programs from any site.

Over the next decade or two, what we now know as the Internet was formed.

Using the Internet as our core example of the three, it seems as though (in hindsight) we can draw faint lines to places throughout history where parts of the future were “predicted”, but in reality, the Internet was only competently foreseen right before it was actually invented.

The internet and its varying predictions showcase one of the fundamental issues with predicting novel, massive future developments (which Taleb would label “Black Swan” developments): the idea that if you are going to understand the future in order to predict it, you need to incorporate elements of the future itself.

Taleb uses a better, clearer example to illustrate this point:

If I expect to expect something at some date in the future, then I already expect that something at present. Consider the wheel again. If you are a Stone Age historical thinker called on to predict the future in a comprehensive report for your chief tribal planner, you must project the invention of the wheel or you will miss pretty much all of the action. Now, if you can prophesy the invention of the wheel, you already know what a wheel looks like, and thus you already know how to build a wheel, so you are already on your way. The Black Swan needs to be predicted!

Using the idea of the wheel in the Stone Age, Taleb illustrates that if you had the level of understanding of the wheel in order to “predict” it, then the “prediction” isn’t a prediction of something completely unknown, but rather a description of something you’ve already conceived.

This is what makes truly novel futures unpredictable:

1) The “Pre-Conception” Trap: For something to be truly novel and unpredictable, it must be something that, by its very nature, falls outside of our current frameworks, concepts, and imaginations. If you can describe it well enough to “predict” it, you’ve already brought it into the realm of the conceivable.

2) Lack of Precursors/Analogies: Major, novel breakthroughs often lack clear precursors or direct analogies in the existing world.

3) Emergent Properties: Truly novel developments often lead to emergent properties that cannot be foreseen by simply analyzing their constituent parts.

4) Technological Dependencies and Leapfrogging: Many breakthroughs depend on a confluence of other, often unpredicted, technological advancements.

5) The “Fuzzy Front End” of Innovation: As discussed in Tenet #3, innovation doesn’t happen in a linear fashion. The initial stages are often characterized by ambiguity, false starts, and unexpected discoveries. What seems like a clear path in hindsight was a messy, uncertain process in the present. Predicting a specific outcome in this “fuzzy front end” is exceedingly difficult because the very nature of the innovation is still being defined.

Taleb's point is that our predictive faculties are inherently limited when it comes to true novelty. If something is truly revolutionary and unprecedented, describing it well enough to "predict" it essentially means you've already conceptualized it. This act of conceptualization moves it from the realm of the truly unknown future into the present realm of invention or discovery.

This discussion relates to our discussion of uncertainties in Layer 3 and Layer 5 in Tenet #4. As Taleb writes, “Prediction requires knowing about technologies that will be discovered in the future. But that very knowledge would almost automatically allow us to start developing those technologies right away. Ergo, we do not know what we will know.”

Therefore, the "biggest developments" are often unpredictable precisely because their novelty means they couldn't exist as clear concepts in the present until they were on the verge of, or actually, being created. Our "failures" to predict them aren't failures of effort, but rather a fundamental limitation stemming from the nature of groundbreaking innovation itself.

Prediction Problem #2: The Delusion of Repetition, Why History Is a Poor Guide for the Extraordinary

This prediction problem is actually two very closely linked issues: the problems with history and the problems with the extraordinary.

To begin, people have skepticism about the use of history to predict future events. Often, those creating predictions of the future assume that the future will be similar to the past. Using language we developed in Tenet #3, these people are assuming a relatively linear view of history and the future.

As Taleb writes, “The only way you can imagine a future 'similar’ to the past is by assuming that it will be an exact projection of it, hence predictable.” In this case, Taleb is assuming a directly causal linear relationship of the past and future (a preposterous view in retrospect).

Relaxing this view slightly, Amar Bhide writes in his book Uncertainty and Enterprise: Venturing Beyond the Known that “Keynes and Knight were the first to seriously question whether patterns of the past always reveal the path to the future.” In relaxing Taleb’s assumption of the future being an exact replica of the past, Keynes and Knight allow for the introduction of exponentials into the equation, adding flexibility to our linear projection.

As such, we can propose that the assumption of repetition can be flawed. In other words, history can be a poor guide for the future. This is especially highlighted in matters of the ordinary and extraordinary.

To add some structure to our discussion, let’s differentiate between ordinary events and extraordinary events. In our case, ordinary events would refer to events that are repeated very closely to how they occurred. Extraordinary events would refer to events that are “one-offs”, events that are non-repeatable.

We discussed the idea of “one-offs” at length in Tenet #4:

The phrase “one-offs” refers to an event, situation, system, or world predicament that is unique, specific, and often non-repeatable in the exact same way.

Because a one-off is so specific and unique, it makes it difficult to apply broad generalizations or objective probabilities. In the ideology of Packy McCormick [over at Not Boring], there is no playbook that accurately and completely explains how to deal with these situations. It is here where subjective uncertainty and doubt come into play.

We, whether we like it or not, primarily learn from repetition. The example I love using to illustrate this point is the analogy of the turkey: It lives on a farm for the first 1000 days of its life without accident, eating and surviving well. If we assume this is an ordinary affair (subject to linear affairs as discussed in Tenet #3), we would assume that day 1001 would continue the same trend.

However, if this is an extraordinary affair (subject to exponential affairs as discussed in Tenet #3 and the vast uncertainty discussed in Tenet #4), we wouldn’t be certain of anything, needing to consider whether or not the next day was Thanksgiving.
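The turkey's reasoning can be sketched in a few lines of Python. This is a toy illustration, not a model from Taleb's book: the "wellbeing" score, its numbers, and the helper name `fit_line` are all assumptions made up for the example. It fits a straight line to 1,000 uneventful days and extrapolates one day forward.

```python
def fit_line(ys):
    """Ordinary least-squares line through the points (1, ys[0]), (2, ys[1]), ..."""
    n = len(ys)
    xs = range(1, n + 1)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical wellbeing score: the turkey is fed a little more every day.
history = [100 + 0.01 * day for day in range(1, 1001)]

slope, intercept = fit_line(history)
forecast_day_1001 = slope * 1001 + intercept

# Assumed outcome on Thanksgiving; nothing in the history hints at it.
actual_day_1001 = 0.0

print(f"forecast for day 1001: {forecast_day_1001:.2f}")
print(f"actual on day 1001:    {actual_day_1001:.2f}")
```

The fit is perfect on every one of the 1,000 observed days, and that is exactly why the forecast is so confident and so wrong. More history of the same kind would not have helped.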

Our reliance on historical data and patterns, as discussed above, while seemingly logical, is inherently biased towards predicting routine, repeatable events—the more routine the task, the better we get at predicting it.

This strength becomes our weakness when we are confronted with novel, non-routine, non-repeatable events (which Taleb labels as “Black Swans”). By assuming the future will simply be an extrapolation of the past, we systematically ignore these novel events and are then surprised when the extraordinary presents itself.

Taleb writes, “My results were that regular events can predict regular events, but that extreme events, perhaps because they are more acute when people are unprepared, are almost never predicted from narrow reliance on the past.”

On the surface, this issue is difficult, but not impossible to deal with. However, this issue gets infinitely more complicated when we consider the rather unpleasant thought I ended with in my discussion of one-offs in Tenet #4:

If you truly consider the extent of the “one-off” theory to its absolute extent, you’ll find that every single event in our world since the beginning of time to this very moment is technically a “one-off” and, as such, is subject to high levels of irreducible uncertainty.

To employ another Taleb quip, “How can we know the future, given knowledge of the past?”

Prediction Problem #3: There Is No Reliable Way to Compute Small Probabilities

In his book, Taleb states, “There is no reliable way to compute small probabilities.” This is one of the key problems he cites with our ability to predict any number of futures.

For instance, what is the probability of being able to travel at light speed in the next decade? It would be an incredibly improbable feat, but our ability to assign a direct probability to it is nearly impossible. Is it 1 in 1,000? 1 in 10,000,000? Who knows?

This is where the field of statistics begins to meet its match. Taleb characterized this as “statistical undecidability”, stating that “statistics is fundamentally incomplete as a field, as it cannot predict the risk of rare events, a problem that is acute in proportion to the rarity of these events.”

In order to accurately compute a very small probability (on the scale of one in millions, billions, or more), you would need an enormous amount of historical data or observations. For almost every problem involving a probability this small, however, there is nowhere near the amount of data necessary to calculate it.

As such, when you don’t have enough data to make a prediction, you resort to theoretical models to estimate probabilities, but even these models aren’t without fault. They rest on countless assumptions about the underlying distribution of events, and those assumptions arguably make them even less accurate.
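To get a rough feel for how much data that means, here is a small sketch. It assumes the simplest possible setting, independent yes/no trials with a fixed probability, which real-world rare events seldom satisfy. The function name `trials_needed` and the 10% precision target are my own choices for illustration.

```python
import math

def trials_needed(p, relative_error=0.10):
    """Approximate number of independent trials needed so that the standard
    error of the observed frequency is `relative_error` times p.

    Uses the binomial standard error sqrt(p * (1 - p) / n) and solves for n.
    """
    return math.ceil((1 - p) / (p * relative_error ** 2))

for p in (1e-3, 1e-6, 1e-9):
    print(f"p = {p:g}: about {trials_needed(p):,} observations")
```

Under these assumptions, pinning down a one-in-a-million probability to within about 10% takes on the order of a hundred million comparable observations, which almost no real-world problem can supply.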

Furthermore, recently, researchers have found that people assign different probabilities to different future states of the world, which they call “subjective probabilities.” As defined, subjective probability is a type of probability derived from an individual’s personal judgment or own experience about whether a specific outcome is likely to occur.

To clarify, Taleb doesn’t mean it’s literally impossible to assign any number to a small probability (there exists some minuscule probability for each almost impossible event), but that any such number provided as an estimate of that probability is inherently unreliable, meaningless in practice, and dangerous to act upon.

An example of this in practice would be the effect of small errors. When you are estimating a very small probability of something occurring, even tiny errors in your methodology can lead to massive errors in the final probability. For example, if the true probability is 0.0001% and you estimate it as 0.001%, you’re off by a factor of 10, potentially huge when multiplied by the potential impact of the event.
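Worked through with numbers, the effect looks like this. The $1 billion loss figure is an assumption chosen purely for illustration; only the two probabilities come from the example above.

```python
# Probabilities from the example above, converted from percent to fractions.
true_probability = 0.0001 / 100       # 0.0001%
estimated_probability = 0.001 / 100   # 0.001%

# Hypothetical cost if the rare event happens (an assumed figure).
loss_if_event = 1_000_000_000

true_expected_loss = true_probability * loss_if_event
estimated_expected_loss = estimated_probability * loss_if_event

print(f"true expected loss:      ${true_expected_loss:,.0f}")
print(f"estimated expected loss: ${estimated_expected_loss:,.0f}")
print(f"ratio: {estimated_expected_loss / true_expected_loss:.1f}x")
```

The absolute gap between the two probabilities is tiny, yet the expected cost differs by a factor of ten: about $1,000 versus $10,000 under these assumptions.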

Prediction Problem #4: Vague Predictions Are Often More Helpful Than Specific Predictions

Upon initial inspection, this statement might seem to be counterintuitive. In our predictions, we desire the utmost precision to ensure accuracy and usefulness.

To illustrate this point, consider the following two predictions:

Prediction #1: The Vice President of the United States will be assassinated on domestic soil in the next year.

Prediction #2: There will be a major attempt to destabilize the United States’ political regime at some point in the next year.

Forced to choose between the two, most people would pick the first for its clarity and simplicity.

However, there are many drawbacks to preferring specific predictions over those that may be more vague and seemingly unhelpful.

The first issue with specific predictions (like the one listed above) is that they have a much higher probability of being incorrect. A highly specific prediction has an extremely low probability of being precisely correct, as even a slight deviation makes it “wrong.” A vaguer prediction has a much higher chance of aligning with reality. It provides a general direction or trend without committing to a single, easily falsifiable point. As such, there’s more room to be right with a vague prediction.
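One way to see this is to treat a vague prediction as a bundle of many specific scenarios, any one of which would make it true. The sketch below uses made-up probabilities and assumes the scenarios are independent, which is a simplification. The function name `probability_any` is hypothetical.

```python
import math

def probability_any(probabilities):
    """Chance that at least one of several independent scenarios occurs."""
    return 1 - math.prod(1 - p for p in probabilities)

# Hypothetical yearly probabilities for ten distinct, specific scenarios.
specific_scenarios = [0.001, 0.002, 0.005, 0.01, 0.003,
                      0.004, 0.002, 0.008, 0.001, 0.006]

most_likely_specific = max(specific_scenarios)
vague = probability_any(specific_scenarios)

print(f"best single specific prediction: {most_likely_specific:.3f}")
print(f"vague 'one of these happens':    {vague:.3f}")
```

Even the most likely specific scenario is several times less probable than the vague statement that covers all of them, and the gap widens as more scenarios fall under the vague umbrella.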

The second issue with specific predictions is the inherent lack of flexibility and adaptability. As the future is inherently uncertain (discussed in Tenet #4), overly specific predictions can create a rigid mindset, leading organizations or individuals to pursue a single path even when new information is presented. Vague predictions, on the other hand, can allow for contingency planning and the ability to adapt as the future unfolds.

Given this information, in retrospect, which of the predictions would you now prefer?

People often prefer precise information, even when it’s less likely to be accurate, which some have called the “precision paradox.” A precise prediction feels more authoritative and insightful, but more often than not, it’s simply a distracting illusion.

As such, specific predictions are like trying to hit a tiny bullseye in the dark. You’re almost certainly going to miss. Vague predictions are like aiming at a much larger target. As Taleb puts it, we shouldn’t try to predict precise Black Swans as it tends to make us more vulnerable to the ones we did not predict.

In their book, Ikigai: The Japanese Secret to a Long and Happy Life, Garcia and Miralles employ a statement by Jeff Howe which summarizes this issue concisely,

In an increasingly unpredictable world moving ever more quickly, a detailed map may lead you deep into the woods at an unnecessarily high cost. A good compass, though, will always take you where you need to go. It doesn’t mean that you should start your journey without any idea where you’re going. What it does mean is understanding that while the path to your goal may not be straight, you’ll finish faster and more efficiently than you would have if you had trudged along a preplanned route.

Prediction Problem #5: Predictions Change Behavior When They Are Widely Known

In Jerry Neumann’s 2015 article Strategies Against Systems, he left a helpful tiny nugget buried within his conclusion paragraph:

Predictions falsify themselves when they are widely known and widely feared.

This statement describes a phenomenon dubbed by social scientists as “reflexive predictions.” A 1973 article by George D. Romanos in Philosophy of Science articulated this concept nicely:

The problem of reflexive predictions is a familiar one for social scientists. The most well known examples of such predictions probably are those which occur in political science where the publication or dissemination of predictions of future political phenomena, such as voting behavior, is frequently assessed as the very cause of these predictions’ coming out true or false.

To be clear, a reflexive prediction is one where the act of making and disseminating the prediction itself influences the outcome, with two main types: self-fulfilling prophecies and self-defeating prophecies.

A self-fulfilling prophecy is a prediction that, once made and believed by relevant parties, causes people to act in ways that make the prediction come true. An example of this could be the false rumor of a bank failing, which causes people to withdraw their money, leading to the bank’s actual collapse.

In contrast, a self-defeating prophecy is a prediction that, when widely known, prompts action to prevent the predicted outcome from happening, thus falsifying the original prediction. From the 1973 article:

Perhaps the single, most notorious instance of such a prediction, even if not the most clear cut, was the prediction of the outcome of the 1948 presidential election. Here the widespread publication of predictions that Dewey would achieve a landslide victory is commonly supposed to have been one of the most important factors leading to his ultimate defeat.

These predictions are deemed “reflexive” as they refer to a circular relationship between cause and effect, where the cause (the prediction) influences the effect (the outcome), and the effect, in turn, can alter the original cause or how it’s perceived. How?

When a prediction is widely disseminated, it enters the collective knowledge base and can become a new piece of information that agents (individuals, organizations, markets, governments) incorporate into their decision-making processes.

Reflexivity makes prediction in these subjective domains (social sciences, economics, etc.) inherently more complex than in the natural sciences. A forecaster isn’t just an observer; they are potentially an active participant in shaping the very future they are trying to predict (what some have hilariously dubbed the “Oedipus effect”). Neumann describes this phenomenon well:

There is one other circumstance, peculiar to human conduct, which stands in the way of successful social prediction and planning. Public predictions of future social developments are frequently not sustained precisely because the prediction has become a new element in the concrete situation, thus tending to change the initial course of developments. This is not true of prediction in fields which do not pertain to human conduct. Thus, the prediction of the return of Halley’s comet does not in any way influence the orbit of that comet; but, to take a concrete social example, Marx’s prediction of the progressive concentration of wealth and increasing misery of the masses did influence the very process predicted. For at least one of the consequences of socialist preaching in the nineteenth century was the spread of organization of labor, which, made conscious of its unfavorable bargaining position in cases of individual contract, organized to enjoy the advantages of collective bargaining, thus slowing up, if not eliminating, the developments which Marx had predicted.

The key is that in systems involving human agency and decision-making, knowledge of a prediction can change behavior.

Given the above 5 issues with our predictions, what types of predictions have we ruled out? Which types of predictions are still relatively valid?

Through a couple of factors, we’ve ruled out predictions of things which are ‘novel’, ‘non-routine’, ‘non-repeatable’, ‘extraordinary’, ‘one-off’, or ‘rare’. Additionally, we’ve ruled out specific predictions.

As such, what are we left with?

We’re relatively good (I’ll elaborate on the relative goodness) at predicting anything ordinary, repetitive, boring, or ‘inconsequential’, as well as anything we only predict in vague terms.

Credit Adobe

UNCERTAINTY RESTRICTS OUR ABILITY TO PREDICT ACCURATELY - DISSECTING THE TRIPLET OF INFORMATION OPACITY - WE CAN’T ACCURATELY PREDICT LAYERS 3-5 OF THE UNCERTAINTY FRAMEWORK

If your elementary or childhood education was like mine, at some point you either read or watched The Giver. No worries if you didn’t or if you’ve forgotten the story, here’s the high-level SparkNotes:

The Giver is written from the point of view of Jonas, an eleven-year-old boy living in a futuristic society that has eliminated all pain, fear, war, and hatred. There is no prejudice, since everyone looks and acts basically the same, and there is very little competition. Everyone is unfailingly polite. The society has also eliminated choice: at age twelve every member of the community is assigned a job based on his or her abilities and interests. Citizens can apply for and be assigned compatible spouses, and each couple is assigned exactly two children each. The children are born to Birthmothers, who never see them, and spend their first year in a Nurturing Center with other babies, or “newchildren,” born that year. When their children are grown, family units dissolve and adults live together with Childless Adults until they are too old to function in the society. Then they spend their last years being cared for in the House of the Old until they are finally “released” from the society. In the community, release is death, but it is never described that way; most people think that after release, flawed newchildren and joyful elderly people are welcomed into the vast expanse of Elsewhere that surrounds the communities. Citizens who break rules or fail to adapt properly to the society’s codes of behavior are also released, though in their cases it is an occasion of great shame. Everything is planned and organized so that life is as convenient and pleasant as possible.

Jonas lives with his father, a Nurturer of new children, his mother, who works at the Department of Justice, and his seven-year-old sister Lily. At the beginning of the novel, he is apprehensive about the upcoming Ceremony of Twelve, when he will be given his official Assignment as a new adult member of the community. He does not have a distinct career preference, although he enjoys volunteering at a variety of different jobs. Though he is a well-behaved citizen and a good student, Jonas is different: he has pale eyes, while most people in his community have dark eyes, and he has unusual powers of perception. Sometimes objects “change” when he looks at them. He does not know it yet, but he alone in his community can perceive flashes of color; for everyone else, the world is as devoid of color as it is of pain, hunger, and inconvenience.

At the Ceremony of Twelve, Jonas is given the highly honored Assignment of Receiver of Memory. The Receiver is the sole keeper of the community’s collective memory. When the community went over to Sameness—its painless, warless, and mostly emotionless state of tranquility and harmony—it abandoned all memories of pain, war, and emotion, but the memories cannot disappear totally. Someone must keep them so that the community can avoid making the mistakes of the past, even though no one but the Receiver can bear the pain. Jonas receives the memories of the past, good and bad, from the current Receiver, a wise old man who tells Jonas to call him the Giver.

The Giver transmits memories by placing his hands on Jonas’s bare back. The first memory he receives is of an exhilarating sled ride. As Jonas receives memories from the Giver—memories of pleasure and pain, of bright colors and extreme cold and warm sun, of excitement and terror and hunger and love—he realizes how bland and empty life in his community really is. The memories make Jonas’s life richer and more meaningful, and he wishes that he could give that richness and meaning to the people he loves. But in exchange for their peaceful existence, the people of Jonas’s community have lost the capacity to love him back or to feel deep passion about anything. Since they have never experienced real suffering, they also cannot appreciate the real joy of life, and the life of individual people seems less precious to them. In addition, no one in Jonas’s community has ever made a choice of his or her own. Jonas grows more and more frustrated with the members of his community, and the Giver, who has felt the same way for many years, encourages him. The two grow very close, like a grandfather and a grandchild might have in the days before Sameness, when family members stayed in contact long after their children were grown.

Meanwhile, Jonas is helping his family take care of a problem newchild, Gabriel, who has trouble sleeping through the night at the Nurturing Center. Jonas helps the child to sleep by transmitting soothing memories to him every night, and he begins to develop a relationship with Gabriel that mirrors the family relationships he has experienced through the memories. When Gabriel is in danger of being released, the Giver reveals to Jonas that release is the same as death. Jonas’s rage and horror at this revelation inspire the Giver to help Jonas devise a plan to change things in the community forever. The Giver tells Jonas about the girl who had been designated the new Receiver ten years before. She had been the Giver’s own daughter, but the sadness of some of the memories had been too much for her and she had asked to be released. When she died, all of the memories she had accumulated were released into the community, and the community members could not handle the sudden influx of emotion and sensation. The Giver and Jonas plan for Jonas to escape the community and to actually enter Elsewhere. Once he has done that, his larger supply of memories will disperse, and the Giver will help the community to come to terms with the new feelings and thoughts, changing the society forever.

However, Jonas is forced to leave earlier than planned when his father tells him that Gabriel will be released the next day. Desperate to save Gabriel, Jonas steals his father’s bicycle and a supply of food and sets off for Elsewhere. Gradually, he enters a landscape full of color, animals, and changing weather, but also hunger, danger, and exhaustion. Avoiding search planes, Jonas and Gabriel travel for a long time until heavy snow makes bike travel impossible. Half-frozen, but comforting Gabriel with memories of sunshine and friendship, Jonas mounts a high hill. There he finds a sled—the sled from his first transmitted memory—waiting for him at the top. Jonas and Gabriel experience a glorious downhill ride on the sled. Ahead of them, they see—or think they see—the twinkling lights of a friendly village at Christmas, and they hear music. Jonas is sure that someone is waiting for them there.

It’s a captivating story, full of despair, ignorance, hope, struggle, color (and lack thereof), and much more—I would recommend a full read or a watch.

The society portrayed in The Giver is an excellent juxtaposition to the “real world” we live in today in one key aspect: the realm of uncertainty.

You may have missed the significance in your hasty read of the summary, so I would recommend going back and rereading the first paragraph particularly. Specifically, there are 3 main ways in which uncertainty is highly limited in this society: “sameness”, assigned roles, and memory control.

Almost everything (as much as possible) is the same within this community; the community leaders have eliminated or significantly suppressed all choice, emotion, pain, weather, landscape fluctuations, and other key differences (racial, emotional, and even color).

At age 12, children are “assigned” their lifelong professions (similar to how it is portrayed in the Divergent series). Additionally, any partners and children are also “assigned” to them. Both of these factors remove a large amount of the uncertainty present in their futures.

Lastly, all memories of pain, historical struggles, and even intense joy are held by one person, the Receiver (later known as the Giver), so the rest of the community lives in a state of naive tranquility.

We should be glad our world isn’t like this. If it were, we too would set off for Elsewhere, in the pursuit of a land wherein there was a larger amount of uncertainty.

Splicing this story into our concept at hand, we’re simultaneously blessed and cursed by the amount of uncertainty present in our world. The very thing that limits our ability to accurately predict the world around us is also one of its beneficial properties: uncertainty.

Jerry Neumann, the curator and proponent of the layers of uncertainty framework I harped on during Tenet #4, continues to provide key points to our topic at hand today, writing in his article Strategy Under Uncertainty:

Uncertainty means being unable to predict the future.

Granted, he does say this within a business context, but the concept still holds strong: the presence of uncertainty within an issue prevents us from accurately predicting anything to do with that issue.

Compared to the more targeted predictive issues noted above, this is a much broader objection.

To begin, let’s start with the issue of information, its quantity, and relevance in relation to uncertainty and predictions.

The relationship between information and prediction is fundamental: information is the raw material for prediction, and its quantity and relevance directly influence our ability to reduce uncertainty and, consequently, to make more accurate and confident forecasts.

When all of the information needed to make a decision is present, the resulting predictions are more likely to be accurate than predictions made with less information. As such, the problems with our predictive abilities multiply as information becomes more incomplete.

Susan Cain broaches this issue from a practical point of view in her book Quiet: The Power of Introverts in a World That Can’t Stop Talking:

The essence of the [Harvard Business School] education is that leaders have to act confidently and make decisions in the face of incomplete information. The teaching method plays with an age-old question: if you don’t have all the facts—and often you won’t—should you wait to act until you’ve collected as much data as possible? Or, by hesitating, do you risk losing others’ trust and your own momentum? The answer isn’t obvious. If you speak firmly on the basis of bad information, you can lead your people into disaster. But if you exude uncertainty, then morale suffers, funders won’t invest, and your organization can collapse.

It’s difficult to derive the answer. Amar Bhide introduces this concept by stating, “Confronting uncertain options requires some awareness of what we don’t know.” What do we need to be aware of?

In this case, it would be the extent of what Taleb has labeled the “triplet of opacity”:

The human mind suffers from three ailments as it comes into contact with history, what I call the triplet of opacity. They are: A) the illusion of understanding, or how everyone thinks he knows what is going on in a world that is more complicated (or random) than they realize. B) the retrospective distortion, or how we can assess matters only after the fact, as if they were in a rearview mirror (history seems clearer and more organized in history books than in empirical reality). C) the overvaluation of factual information and the handicap of authoritative and learned people, particularly when they create categories—when they “Platonify”.

Diving deeper into this triplet, each portion closely affects our abilities to predict.

Firstly, the illusion of understanding refers to our innate tendency to believe we grasp the world’s complexities more thoroughly than we actually do. In other words, we’re succumbing to the pathology of thinking that the world we live in is more understandable, more explainable, and therefore more predictable than it actually is.

Our discussions of Tenets #1, #2, and #3 asserted this fact. This portion manifests itself in the following ways (which we’ll discuss later on in this article):

The Narrative Fallacy

Confirmation Bias

Hindsight Bias

Ignorance of the Unknowns

This illusion is dangerous for our predictions because if you think you understand a system perfectly, you won’t seek out new information, challenge your assumptions, or prepare for unexpected outcomes. When the world eventually deviates from your simplified model, it leaves you open to blind spots and tricky situations.

The second portion of this triplet is the retrospective distortion. This biased viewpoint describes how history, when viewed in retrospect, appears far more orderly, logical, and predictable than it actually was in real time. We’ll discuss this much further in our bias section below.

The third portion of this triplet is the overvaluation of factual information. This refers to the dangers of relying too heavily on overly precise, categorized, or expert-driven knowledge, especially when it attempts to force complex reality into neat, rigid mental “boxes”. Again, we discussed this deeply in Tenet #4, how our simplification, while seeming to help in the short run, only hurts us in the long run. This portion manifests itself in the following ways (which we’ll discuss later on in this article):

Over-Reliance on “Facts”

Categorization and Reductionism

The “Expert Blind Spot”

Ignoring Unknown Unknowns

This overvaluation exposes us to the problem of the “tunnel vision” effect (again, we’ll talk more in depth about this later on in this article).

As illustrated, this triplet of opacity is just one way in which information affects uncertainty and prediction. In our discussion of uncertainty in Tenet #4, we highlighted the importance of information throughout the layers of uncertainty:

The concept of reducible uncertainty, also known as “epistemic uncertainty,” refers to Layers 1-3, which arise from a lack of knowledge or information about a system or part of the world. It’s the uncertainty that we could reduce by collecting more data, conducting more research, improving our models, or refining our understanding.

The concept of irreducible uncertainty, also known as “aleatoric uncertainty,” refers to Layers 4-5, which arise from the inherent randomness or variability within the world. It’s the uncertainty that cannot be eliminated, no matter how much data you collect or how much you refine your models, because it’s a fundamental property of the system.
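To make the distinction concrete, here is a minimal sketch of my own (not from Neumann or Taleb), assuming a hypothetical biased coin that lands heads 70% of the time. The function names `estimate_bias` and `best_hit_rate` and the 0.7 figure are illustrative assumptions. More flips shrink our ignorance about the coin’s bias (the reducible part), but no amount of data lets us call any single flip correctly more than about 70% of the time (the irreducible part).

```python
import random

def estimate_bias(n_flips, true_p=0.7, seed=0):
    """Estimate a coin's heads probability from n_flips simulated observations.

    true_p is an assumed, illustrative bias; in real life we would not know it.
    """
    rng = random.Random(seed)
    heads = sum(rng.random() < true_p for _ in range(n_flips))
    return heads / n_flips

def best_hit_rate(p):
    """Best achievable accuracy when guessing single flips of a coin
    whose heads probability is p (always guess the likelier side)."""
    return max(p, 1 - p)

# Reducible part: the estimate of the bias tightens as data grows.
# Irreducible part: the hit rate on any single flip stays capped near 0.7.
for n in (10, 1_000, 100_000):
    p_hat = estimate_bias(n)
    print(n, round(p_hat, 3), round(best_hit_rate(p_hat), 3))
```

The first column of numbers should settle toward 0.7 as the sample grows, while the last column never approaches 1.0. That ceiling is the part of the uncertainty that data cannot buy back.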

The uncertainty angle helps provide depth and clarity to the above discussion of prediction, especially once we add in the specific layers from the uncertainty framework we discussed in Tenet #4:

In Layer 1, the future is relatively clear, often because we possess a high quantity of highly relevant, reliable, and timely information. This information allows us to identify stable trends, understand cause-and-effect relationships, and project forward with a relatively high degree of confidence.

In Layer 2, there are many alternative futures. Here we have some information, but it’s incomplete or ambiguous, leading to a limited set of distinct possible outcomes. The information to make predictions exists, but we don’t personally have it. The goal within this layer is to collect more targeted information to determine which of these alternatives is most likely.

In Layer 3, there is a wide range of potential futures. Unfortunately, our information is insufficient to narrow them down into discrete and measurable alternatives. The relevance and quantity of available information are low; collecting more information helps us understand the boundaries of this range.

In Layer 4, we have reached a state of true ambiguity (fundamental unpredictability). Even with vast amounts of information, the future remains fundamentally ambiguous due to inherent randomness, complexity, or novel emergent properties of the world (discussed in Tenet #1).

In Layer 5, we are in a realm of true chaos (complete unpredictability). In truly chaotic systems, even tiny variations in initial conditions (which we can never perfectly measure or know, no matter how much information we gather) lead to vastly different outcomes.
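As a rough illustration of that sensitivity, here is a small sketch using the logistic map, a standard textbook toy model of chaos. This is my choice of example, not one drawn from the uncertainty framework itself; the starting value 0.2, the nudge of 1e-10, and the function names are all illustrative assumptions. Two trajectories begin almost identically and end up bearing no resemblance to one another.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x); with r = 4 the map behaves chaotically."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs

def gaps(x0, nudge, steps):
    """Absolute gap, step by step, between two runs whose starting
    points differ only by `nudge`."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + nudge, steps)
    return [abs(u - v) for u, v in zip(a, b)]

g = gaps(0.2, 1e-10, 80)
print("gap after 5 steps:", g[5])        # still microscopic
print("largest gap within 80 steps:", max(g))  # comparable to the full 0-to-1 range
```

A measurement error in the tenth decimal place is enough to make the long-run forecast worthless, which is why gathering more information does not rescue us in this layer.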

Overall, information is crucial for prediction, but its effectiveness depends entirely on the nature of the uncertainty. For reducible uncertainties, more information is the key to better predictions. For irreducible uncertainties, even perfect information won’t yield precise predictions.

The above discussions of uncertainty layers and the triplet of opacity have helped develop the notion that uncertainty present in the world restricts our abilities to accurately predict future events.

One more perspective is necessary to fully define exactly which parts of the world we’re able to properly predict and which have restrictions.

Jerry Neumann’s 2020 article, Productive Uncertainty, provides an adequate outline for this discussion. I’ll interleave its ideas with the uncertainty layers we’ve discussed here and throughout Tenet #4:

Neumann starts by differentiating between novelty uncertainty and complexity uncertainty. Novelty uncertainty pertains to Layer 3 uncertainty, and complexity uncertainty pertains to Layer 4 and 5 uncertainty.

As we defined, novelty uncertainty is when there are things you just don’t know, even after doing all of your research and thinking things through to their logical conclusions. It’s especially common when someone does something for the first time—hence the employment of the word novelty.

When something hasn’t been done before, often no one can predict the outcome (with any better-than-chance accuracy, that is). As Neumann writes, “Prediction relies on either inductive or deductive reasoning: the first requires data and the second requires an understanding of the process that produces the result. Novelty uncertainty results when we have neither.”

As we defined, complexity uncertainty covers unknown unknowns (possibilities we cannot imagine) as well as known unknowns.

It’s impossible to predict what many complex systems will do due to their interconnections and interdependencies. Information opacity and feedback loops make outcomes impossible to predict.

In either case, novelty or complexity, we face prediction limitations:

Novelty: When you can’t predict something because no one has done it before.

Complexity: When you can’t predict something because the system you are in is changing in an unpredictable way.

Through this, we’ve argued that matters falling into Layers 3-5 of our uncertainty framework cannot be predicted with any accuracy beyond lucky one-offs.

Ultimately, I agree with Amar Bhide on this issue: “Uncertainty fascinates and challenges. An entirely predictable existence would be unbearably dull.” I would much rather live in our society than the society portrayed in The Giver.

Credit CBS 42

WE WANT TO BELIEVE THE STORY THAT BIRDS AREN’T REAL - HUMANS STRIVE TO CURATE NARRATIVES IN EVERYTHING WE DO - NARRATIVES BLIND US TO THE WORLD AROUND US, COMPROMISING OUR PREDICTIVE ABILITIES

I love a good conspiracy theory; some are truly believable (scarily so) and others are… less than believable.

According to a young man named Peter McIndoe, who wrote a poster after seeing pro-Trump counter-protestors at the 2017 Women’s March in Tennessee, birds aren’t real.

What followed was a large-scale satirical conspiracy theory detailing the extent to which the birds that exist today are fake (i.e., not real). Don’t you love it!

The movement claims that in the United States, all birds were exterminated by the federal government between 1959 and 1971 and replaced by lookalike drones. These drones are used by the government to spy on citizens. The movement claims that birds sit on power lines to recharge themselves, that birds defecate on cars to track them, and, astonishingly, that President Kennedy was assassinated by the government because he was reluctant to pursue the mass killing of the birds.

Doesn’t that explain everything you’ve ever wondered about the world? Can’t you see the truth?

Whether you believe in the conspiracy or not, the story is captivating. Even if you’re a delusional skeptic, you considered it for a second. We may never know the exact truth—are birds real or are they government drones?

Taleb suggests that through the study of uncertainty present in our world and our reactions to it, we can begin to see biases, complexities, and blind spots in our abilities to see and ultimately predict the world.

To begin, we must start with what Taleb calls the “narrative fallacy.” On a simple scale, this fallacy speaks to a fundamental human cognitive bias: our innate desire to create coherent, simple stories to explain complex, random, and often unpredictable events.

There are multiple parts that make up the overarching narrative fallacy:

Our craving for stories and narratives

Simplification of the world around us

The illusion of understanding

Hindsight bias and retrospective predictability

Ignoring randomness and “silent evidence”

Human minds are wired to seek patterns, cause-and-effect relationships, and meaning in the world around us. When faced with a sequence of facts or events, our minds automatically try to connect them into a narrative, even if those connections are not truly logical or accurate. We want to be told stories; this is why humans tell stories to their kids, and this is what fascinates us with fiction. As Taleb writes:

We love the tangible, the confirmation, the palpable, the real, the visible, the concrete, the known, the seen, the vivid, the visual, the social, the embedded, the emotionally laden, the salient, the stereotypical, the moving, the theatrical, the romanced, the cosmetic, the official, the scholarly-sounding verbiage, the pompous Gaussian economist, the mathematicized crap, the pomp, the Académie Française, Harvard Business School, the Nobel Prize, dark business suits with white shirts and Ferragamo ties, the moving discourse, and the lurid. Most of all we favor the narrated.

As discussed in Tenet #4, we are constantly engaged in simplifying life around us. We do this to ignore or downplay sources of uncertainty that are too distant, too complex, or too slow-moving to impact our immediate decision-making.

To assist in our creation of these narratives, we employ these simplification techniques, helping to reduce the vast amounts of information and uncertainty around us into a digestible storyline. As Taleb writes, “Our minds are wonderful explanation machines, capable of making sense out of almost anything.” This simplification, as discussed in Tenet #3, often includes imposing a linear, causal structure where none truly exists, leaving us vulnerable to exponential outcomes.

This is where the illusion of understanding comes into play. Once we construct a compelling story, we tend to believe it to be true (or more accurately, a true representation of the reality around us)—even if it’s based on incomplete information, hindsight bias, or pure speculation.

To quote what I wrote in a section above:

Firstly, the illusion of understanding refers to our innate tendency to believe we grasp the world’s complexities more thoroughly than we actually do. In other words, we’re succumbing to the pathology of thinking that the world we live in is more understandable, more explainable, and therefore more predictable than it actually is.

To bring us back to the topic at hand, this illusion of understanding makes us feel more confident in our ability to predict future events, leading to a dangerous overestimation of our knowledge.

This situation is also known as epistemic arrogance, the concept that refers to an exaggerated sense of one’s own knowledge or understanding, often leading to a dismissal of alternative perspectives and a lack of openness to learning from others. In brief, it’s when we overestimate what we know and underestimate uncertainty, when we think “narrowly.”

Bringing us back to our core discussion here, Taleb states, “narrativity causes us to see past events as more predictable, more expected, and less random than they actually were.”

The narrative fallacy exposes our vulnerability to overinterpretation and our predilection for stories over raw truths. As Taleb writes, “it takes considerable effort to see facts (and remember them) while withholding judgment and resisting explanations.” It’s difficult for humans to look at the facts without trying to weave an explanation through them.

The narrative fallacy is closely linked to the confirmation bias and the hindsight bias.

Confirmation bias is when we tend to seek out, interpret, and remember information in a way that confirms our existing beliefs or our constructed narratives. Per Wikipedia, “people display this bias when they select information that supports their views, ignoring contrary information or when they interpret ambiguous evidence as supporting their existing attitudes.”

A 2016 article by Ron, Oren, and Dar cited a 1993 article by Friedrich, stating, “Confirmation bias is the tendency to make predictions and examine them by searching for information that is expected to confirm anticipations or desirable beliefs, avoiding the collection of potential refuting evidence.” In other words, we see evidence we want to see, evidence that conforms to our narrative of the world.

Hindsight bias is the tendency for people to perceive past events as having been more predictable than they were. After an event has occurred, people often believe that they could have predicted it or perhaps even “known” what the outcome of the event would be before it occurred.

Taleb describes the hindsight bias as such:

History, I will repeat, is any succession of events seen with the effect of posteriority. This bias extends to the ascription of factors in the success of ideas and religions, to the illusion of skill in many professions, to success in artistic occupations, to the nature versus nurture debate, to mistakes in using evidence in the court of law, to illusions about the “logic” of history—and of course, most severely, in our perception of the nature of extreme events.

Hindsight bias leads people to overestimate how well they could have predicted an event before it happened. It can make people forget that outcomes were uncertain at the time and fail to appreciate the complexity of the situation.

By focusing on neat narratives and our simplified view of the world, we often fail to account for the immense role of randomness and “silent evidence.”

In our discussions of futurists, I voiced Taleb’s concerns about our perceptions of randomness and their effects on our abilities to predict. In summary, he accused these people of attributing success to their own abilities and failures to things outside of their control.

To add a layer to his claims, Taleb specifically states, “We humans are the victims of an asymmetry in the perception of random events. We attribute our successes to our skills, and our failures to external events outside our control, namely to randomness.”

In practicality, this is often called the self-serving bias. Whether we like it or not, we have a built-in mechanism to protect our ego and self-esteem. When good things happen, we’re quick to take credit, attributing our success to our skills, intelligence, hard work, or deep understanding. Inversely, when things go wrong, we tend to externalize the blame, pointing to external factors outside our control.

Taleb’s wording fits this built-in mechanism: he casts us as “victims” of an asymmetry in how we perceive random events.

This phrase, specifically highlighting the victimology, properly encapsulates this bias. We don’t perceive success and failure symmetrically. While this approach can be beneficial for our mental well-being in many ways, it dramatically affects our ability to predict.

Specifically, this presents itself in four unique ways:

Distorted Learning from Experience: When we succeed, we think it’s us. When we fail, we think it’s something other than us. This dramatically distorts our abilities to learn from our experiences.

Overconfidence and Unrealistic Expectations: By constantly taking credit for successes and deflecting blame for failures, we develop an inflated sense of our own predictive capabilities.

Resistance to Feedback and Learning: If you consistently attribute failures to external factors, you’ll be less open to constructive criticism or feedback (the opposite of a growth mindset).

Poor Decision-Making: The self-serving bias compounds our unrealistic self-assessments, which can lead to suboptimal decisions (and likewise, suboptimal predictions).

As such, randomness, and our perception of it, heavily shapes the narratives that we tell ourselves and, in turn, our ability to predict and learn from our predictions (via confirmation and hindsight biases).

Additionally, “silent evidence,” as those versed in this world call it, is incredibly impactful on the narratives we tell ourselves. To define the term, silent evidence refers to information or evidence that is overlooked, ignored, or not readily available.

Taleb uses a vivid example to illustrate his point:

The fund-management industry claims that some people are extremely skilled, since year after year they have outperformed the market. They will identify these “geniuses” and convince you of their abilities. My approach has been to manufacture cohorts of purely random investors and, by simple computer simulation, show how it would be impossible to not have these geniuses produced just by luck. Every year you fire the losers, leaving only the winners, and thus ending up with long-term steady winners. Since you do not observe the cemetery of failed investors, you will think that it is a good business, and that some operators are considerably better than others. Of course an explanation will be readily provided for the success of the lucky survivors: “He eats tofu,” “She works late; just the other day I called her office at 8 PM….” Or of course, “She is naturally lazy. People with that type of laziness can see things clearly.”

The main way we see silent evidence surface in the world is through the disregard of unsuccessful outcomes while magnifying successful outcomes, as witnessed in the example above.
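Taleb's thought experiment can be sketched in a few lines of Python. This is a minimal illustration, not Taleb's actual simulation: the cohort size, the 50% chance of beating the market in any given year, and the function name `simulate_survivors` are all assumptions made for the example.

```python
import random

def simulate_survivors(n_investors=10_000, n_years=10, p_beat=0.5, seed=42):
    """Track how many purely random investors keep a perfect record.

    Every investor is a coin flip (assumed p_beat = 0.5). Each year the
    losers are fired, so only investors with an unbroken winning streak remain.
    """
    rng = random.Random(seed)
    survivors = n_investors
    history = [survivors]
    for _ in range(n_years):
        # Each remaining investor beats the market with probability p_beat.
        survivors = sum(1 for _ in range(survivors) if rng.random() < p_beat)
        history.append(survivors)
    return history

history = simulate_survivors()
for year, count in enumerate(history):
    print(f"after year {year}: {count} investors with a perfect record")
```

With these assumed numbers, roughly half the cohort disappears each year, yet a handful of "geniuses" with a spotless ten-year record typically remain, even though nobody in the simulation has any skill. The fired investors are the cemetery we never observe.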

Taleb gives another example to illustrate the opposite:

Does crime pay? Newspapers report on the criminals who get caught. There is no section in The New York Times recording the stories of those who committed crimes but have not been caught. So it is with cases of tax evasion, government bribes, prostitution rings, poisoning of wealthy spouses (with substances that do not have a name and cannot be detected), and drug trafficking. In addition, our representation of the standard criminal might be based on the properties of those less intelligent ones who were caught.

In this example, we see the opposite of the example above: silent evidence is the successful outcomes (the criminals who weren’t caught), while we’re magnifying the unsuccessful outcomes (the criminals who were caught).

Throughout our narratives and daily lives, we see the obvious: that which is directly in front of us (these things confirm our views of the world, which is confirmation bias). We fail to see, overlook, or simply disregard the invisible and less obvious facts, yet those are generally the more meaningful events (we’re entering Black Swan territory here).

This affects our predictive abilities because, much like the futurists Taleb accuses of failing to take responsibility for failed predictions, the silent evidence bias distorts our perception of past events. It is what events use to conceal their randomness.

For instance, if we survive a significant crisis, the silent evidence bias lowers our perception of the risk we incurred, so that in retrospect we underestimate how risky the situation actually was. In Taleb’s words: “Do not compute odds from the vantage point of the winning gambler… but from all those who started in the cohort.”

Unfortunately, as we’ve seen through the presence of Black Swan events, the silent evidence bias hides best when it has the largest impact. As Taleb writes, “the severely victimized are likely to be eliminated from the evidence.”

Taleb leaves us with the final word on silent evidence: “Once we seep ourselves into the notion of silent evidence, so many things around us that were previously hidden start manifesting themselves.”

To summarize the role of randomness and silent evidence in the narrative fallacy: by focusing strictly on narratives and stories, and by failing to account for the immense role of randomness and silent evidence, we create a skewed perception of reality.

Taleb offers a concluding remark on the narrative fallacy:

Now, I do not disagree with those recommending the use of a narrative to get attention. Indeed, our consciousness may be linked to our ability to concoct some form of story about ourselves. It is just that narrative can be lethal when used in the wrong places.

Credit Medium

NEVER BRING GYM TRAINING TO A STREET FIGHT - ADDRESSING KEY BIASES, COMPLEXITIES, AND BLIND SPOTS PRESENT IN OUR PREDICTIVE ABILITIES - WE CAN’T ESTIMATE UNKNOWN EVENTS, BUT WE CAN ESTIMATE THEIR IMPACT

Have you ever seen one of those side-by-side pictures online that show two people, usually a smaller, renowned professional fighter next to a huge, muscular bodybuilder, with a caption like “It’s hard to explain but the guy on the left would defeat the guy on the right in a fight”?

If not, here’s one below:

Credit Reddit

This is a funny example (in an abstract way) of one of our predictive fallacies (the Ludic fallacy, which we’ll discuss below).

When thinking about defending against and attacking other people, fighters often choose highly organized competitive fighting styles (Karate, Boxing, Krav Maga, Judo, etc.). These practices train athletes to excel within a specific set of rules, techniques, and permissible moves. Fighters trained in this manner focus on optimizing for known combat scenarios.

However, this can contradict one of the most basic properties of real combat: real-life combat or street fighting has no rules. It’s due to this that one of the most basic laws of self-defense was coined: fight in any way necessary to protect yourself.

Opponents can use dirty tricks, surprise weapons (never bring a knife to a gun fight), or attack in ways that are completely “illegal” in the formal “game” of fighting.

Unpreparedness for unforeseen tactics or weapons can lead to defeat or death, even for highly skilled individuals who mistake their training for reality. In this case, our predictions of what lies ahead can literally leave us vulnerable to what is to come.

Besides the ever-present, overarching narrative fallacy and the triplet of opacity discussed previously, a multitude of other biases, complexities, and blind spots compromise our ability to predict. The main eight are below:

The Ludic Fallacy

Tunneling

Framing

The Role of Luck

Ideas Are Sticky

Misrepresenting Past Predictions

Ignorance of Our Prediction Accuracy & Timeframe

Ignorance of the Unknowns

1) The Ludic Fallacy

The ludic fallacy, as Taleb dubbed it, is the misuse of games to model real-life situations: the improper use of structured games to understand and predict the real world.

To set up the situation at play, games have known rules and probabilities. For instance, the rules of blackjack are fixed and transparent. The probability of a certain card being drawn can be precisely calculated (ever watch a live-streamed tournament with the real-time stats?).

Unlike games, real life has unknown rules and probabilities. Real-world phenomena—especially those involving matters governed by Exponentland (as defined in Tenet #3)—do not operate with such clear-cut rules or observable probabilities.

There are other factors at play here:

Unknown unknowns (Layer 5 uncertainty as discussed in Tenet #4): In real life, we don’t know all the possible variables, interactions, or even the underlying rules of the game. We can’t list all potential outcomes, let alone assign precise probabilities to them.

Silent evidence (as discussed above): We often miss crucial information—the things that didn’t happen, the variables we didn’t consider, or the non-linear effects that we struggle to grasp (as discussed in Tenet #3).

Non-stationary factors (Layer 4 uncertainty as discussed in Tenet #4): The “rules” of the real world (whatever they may be at any time) can change over time. What was true yesterday might not be true today.

This fallacy leaves us vulnerable to many predictive issues. To state the obvious, an over-reliance on simplified or mass-adopted models (whether the Gaussian bell curve discussed in Tenet #3 or other Linearland properties) can fail when we face inputs outside the model, those governed by exponential or non-linear factors.

Additionally, this fallacy leads us to underestimate risk (similar to how we retroactively underestimated risk in the “silent evidence” example above), have a false sense of security, and engage in tunneling.

2) Tunneling

The ludic fallacy reinforces tunneling, so it is worth a closer look. Tunneling here refers to the fact that we focus only on known variables and ignore “unknown unknowns” (Layer 5 uncertainties), blinding us to truly novel and impactful events.

Tunneling is our natural human tendency to focus on a limited, simplified, often pre-defined set of known variables and potential outcomes when trying to understand or predict the future, while systematically ignoring or overlooking everything else (here connecting the Ludic fallacy and the Narrative fallacy).

By ignoring those irreducible uncertainties, we behave as though Black Swan events don’t exist when, in fact, they do, leaving us blind when they eventually appear.

3) Framing

Framing is a way of presenting choices, decisions, and options. Amar Bhide illustrates this principle in a lovely way:

In the first framing, K-T offered students a choice between two public health programs to deal with an epidemic threatening six hundred lives: one program would save two hundred lives; the other had a one-third chance of saving all six hundred lives and a two-thirds chance of saving none. Presented thus, 72% were risk averse—they preferred the program that would save two hundred lives for sure, although the “probability-weighted” value of the other option was the same, namely two hundred survivors. In a second version, subjects were told that one program would result in four hundred lives lost, while the other had a two-thirds chance of six hundred lives lost and a one-third chance of no deaths. Now 78% favored risk-taking: the certain death of four hundred was less acceptable than a two-thirds chance of six hundred casualties. Yet the two problems were “effectively identical.” The difference was just in the framing, as lives were saved in the first and lives were lost in the second.

If you aren’t familiar with the term or didn’t grasp its meaning through the example provided above, the framing effect is when our decisions are influenced by the way information is presented. As you’ve seen through the example, there are many ways in which equivalent information can be more or less attractive depending on what features are highlighted.
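The claim that the two framings are “effectively identical” can be checked with a few lines of arithmetic. The sketch below uses only the numbers from the example above; the variable names are ours, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction

TOTAL = 600  # lives threatened by the epidemic in the example

# Framing 1: outcomes described as lives saved.
sure_saved = 200
gamble_saved = Fraction(1, 3) * TOTAL + Fraction(2, 3) * 0

# Framing 2: outcomes described as lives lost.
sure_lost = 400
gamble_lost = Fraction(2, 3) * TOTAL + Fraction(1, 3) * 0

# Convert the "lives lost" framing back into expected survivors.
print("framing 1 survivors:", sure_saved, "vs", gamble_saved)
print("framing 2 survivors:", TOTAL - sure_lost, "vs", TOTAL - gamble_lost)
```

All four quantities come out to two hundred expected survivors. Only the wording changes between the two versions, yet the majority preference flips from the sure option to the gamble.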

This effect can appear whether you have all the necessary information or none at all. Decisions based on framing focus on the way the information is presented instead of the information itself, so, in theory, the effect could be at work in every single decision or prediction.

To simplify, how information is presented matters. When evaluating information to make a prediction or when evaluating a prediction already made, the framing of the outcome can affect how accurate we perceive it to be. A 2023 Cambridge paper by Saiwing Yeung found that the framing of predictions, especially the directionality of these predictions (whether it was a prediction of success or failure), might influence how people evaluate the accuracy of the predictions.

4) The Role of Luck

Luck and prediction are distinct concepts, though they are closely intertwined. Prediction, as we’ve discussed at length today, is the act of forecasting future events based on analysis, experience, or other factors.

Luck, on the other hand, is generally associated with unexpected events that occur beyond one’s control. Whether we notice it or not, we humans often underestimate the role of luck in every aspect of our lives.

Where luck and prediction interact is an interesting case study. For instance, unexpected events (whether positive, negative, or neutral) can and do affect the accuracy of predictions.

Using our framework from Tenet #3, in matters governed by Linearland, traditional predictive methods have much validity. Luck still plays a role, but its effects tend to average out over a large number of observations. In contrast, for matters governed by Exponentland, we’ve seen that our abilities to predict are largely futile because the significant events (those one-off, non-repeated, novel events) are precisely the ones that were unpredictable. Here, luck becomes the dominant factor in determining outcomes, far more impactful than skill or meticulous planning.
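The contrast between the two regimes can be illustrated with a small simulation. This is a sketch under loud assumptions: it stands in a bell-shaped (Gaussian) quantity for Linearland and a heavy-tailed Pareto quantity for Exponentland, which is our reading of the Tenet #3 terms rather than their definition. The parameters (mean 170, standard deviation 10, Pareto shape 1.1) and the helper `largest_share` are hypothetical choices for illustration.

```python
import random

def largest_share(samples):
    """Fraction of the total contributed by the single largest observation."""
    return max(samples) / sum(samples)

rng = random.Random(0)
n = 100_000

# Assumed stand-in for Linearland: a bell-shaped quantity such as height in cm.
thin = [rng.gauss(170, 10) for _ in range(n)]

# Assumed stand-in for Exponentland: heavy-tailed Pareto draws (shape 1.1).
heavy = [rng.paretovariate(1.1) for _ in range(n)]

print(f"thin-tailed:  largest single share of total = {largest_share(thin):.6f}")
print(f"heavy-tailed: largest single share of total = {largest_share(heavy):.6f}")
```

In the thin-tailed sample, no single observation matters: the largest one is a vanishing fraction of the total, so luck washes out in the average. In the heavy-tailed sample, one draw can account for a visible share of the entire sum, which is why a single lucky or unlucky event can dominate the outcome.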

5) Ideas Are Sticky

The theory that our ideas are sticky is very similar to the concept of tunneling. Calling ideas sticky is a metaphor: once they are put into our heads (through any number of means), they stay stuck there even when we’re presented with evidence to the contrary.

It’s similar to the concept of a stubborn person; they are unreasonably persistent or unyielding in their opinions or actions, refusing to change their mind or behavior despite attempts to persuade them otherwise.

The core concept here is a combination of belief perseverance and confirmation bias. Once we form a belief or a theory (or a prediction) we tend to cling to it, even in the face of contradictory evidence. Our brains dislike cognitive dissonance—the discomfort of holding conflicting ideas. To avoid this, we often dismiss, reinterpret, or simply ignore information that challenges our existing views.

Taleb adds some advice to this idea, stating, “The problem is that our ideas are sticky: once we produce a theory, we are not likely to change our minds—so those who delay developing their theories are better off.”

What he means by delaying in developing theories is that we should avoid premature certainty; jumping to conclusions or solidifying a theory too early can be detrimental. In this case, we should maintain intellectual flexibility, learn from negative evidence, and adapt to changing information.

6) Misrepresenting Past Predictions

The past, present, and future are some of the most complicated entities. We’ll discuss them much more in depth during Tenet #10, but here’s a small appetizer.

There’s a powerful blind spot in our predictive minds, one relating to the past, present, and future. When we think of tomorrow and subsequently try to predict what it will look like, we do so blindly.

We don’t frame these predictions in terms of what we predicted yesterday about today (or what we predicted the day before about yesterday). In other words, we don’t factor our previous predictions, their accuracy, and what we can learn from them into our future predictions.

In essence, we fail to learn recursively from past experiences, and this failure continues to blind us in our future experiences. Because we don’t learn from history, we are bound to repeat it.
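One simple way to learn recursively is to keep a log of past predictions and compare stated confidence with what actually happened. The sketch below is only an illustration: the `Prediction` record, the helper functions, and every log entry are hypothetical, invented for the example.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    claim: str
    confidence: float  # stated probability (0 to 1) that the claim comes true
    came_true: bool    # what actually happened

def hit_rate(log):
    """Share of logged predictions that came true."""
    return sum(p.came_true for p in log) / len(log)

def average_confidence(log):
    """Mean probability we assigned to our own predictions."""
    return sum(p.confidence for p in log) / len(log)

# Hypothetical entries, for illustration only.
log = [
    Prediction("Project ships by June", 0.9, False),
    Prediction("Rates fall this year", 0.8, True),
    Prediction("Team doubles in size", 0.9, False),
    Prediction("Competitor exits the market", 0.7, True),
]

gap = average_confidence(log) - hit_rate(log)
print(f"average stated confidence: {average_confidence(log):.3f}")
print(f"actual hit rate:           {hit_rate(log):.3f}")
print(f"overconfidence gap:        {gap:.3f}")
```

A large positive gap suggests that tomorrow's forecasts deserve less confidence than we feel inclined to give them, which is precisely the feedback we skip when we predict blindly.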

7) Ignorance of Our Prediction Accuracy & Timeframe

When someone makes a prediction, how do we know how accurate that prediction is?